Introduction

As businesses increasingly digitize operations, vast amounts of transactional data, such as sales receipts, are often stored as scanned images or photos. Extracting meaningful, structured information from these image-based documents can support a variety of analytical and operational workflows, such as sales tracking, sales management, and customer insights.

Recent advancements in large language models (LLMs) have opened new possibilities for interpreting and extracting information from such inputs with greater accuracy and flexibility. The GeoAI toolbox in ArcGIS Pro supports integration of third-party language models, allowing users to process and analyze text using external AI services. Custom third-party models can be wrapped in Esri Deep Learning Package (.dlpk) files and used within GeoAI tools and the arcgis.learn API. In this sample, we demonstrate how one such model, GPT-4o from OpenAI, can be used with the Process Text Using AI Model tool to extract relevant entities from receipt images. Third-party model support in ArcGIS Pro and the arcgis.learn API allows users to bring in AI models (whether hosted by providers such as OpenAI or Azure, built from open-source code, or fine-tuned for a specific task) to enhance natural language processing directly within ArcGIS workflows.

For this use case, we use a set of sales receipt images and perform entity extraction to identify key pieces of information. The extracted entities can be used for downstream tasks like sales analytics, customer profiling, inventory management, or tax auditing. The model extracts the following entities:

- Sale Date

- Customer Name

- Product

- Quantity

- Unit Price

- Total Amount

- Tax Rate

- Payment Method

This use case highlights how advanced AI models can streamline the transformation of unstructured receipt data into structured, analysis-ready datasets within the ArcGIS ecosystem.

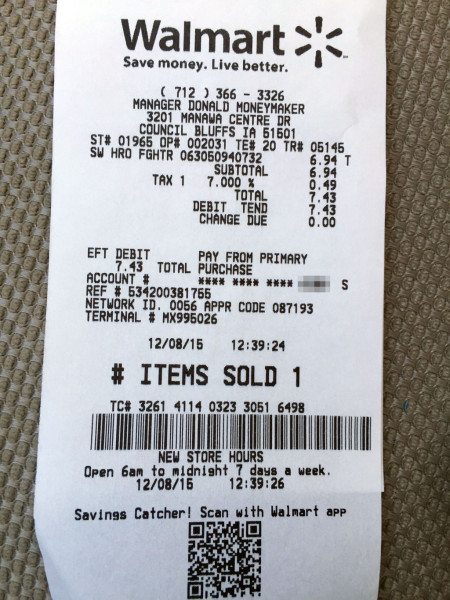

Dataset

For this use case, we use a sample set of images of printed sales receipts from various retail outlets like Walmart, Costco Wholesale, etc.

Capability demonstration

- Out-of-the-box use of pre-trained model

- Minimal or no annotation required

- Comparable accuracy

Create the third-party deep learning package

The first step in using a third-party language model is to prepare a Deep Learning Package file (.dlpk). This package includes your custom NLP Python function to interact with external models, along with an Esri Model Definition (.emd) file. In this use case, we use a third-party hosted model (**GPT-4o**) to extract key entities from scanned sales receipt images. Note that if you use a web-hosted LLM, the data being processed is sent to the LLM provider. Use these models only if you trust their source.

Components of the third-party deep learning package (.dlpk)

- An Esri Model Definition file (LLMEntityExtractor.emd).

- A custom Python NLP function (e.g., MyLLMEntityExtractor.py) that defines how to interact with the GPT-4o API and parse the results.

The first component of a third-party Deep Learning Package (.dlpk) is an Esri Model Definition (.emd) file. Let's create the .emd file:

Esri model definition (.emd) file

The Esri Model Definition (.emd) file contains both required and optional keys necessary for executing the model. A valid .emd file for use with the Process Text Using AI Model tool must include the following essential keys:

- InferenceFunction: Specifies the name of the module that defines the custom NLP function.

- ModelType: Indicates the type of task being performed.

Additional keys may be included at the discretion of the model extension author. In this case, we’ve added the system prompt itself under the key sys_prompt.
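An .emd file is read with json.load, so it must be strict JSON. JSON strings cannot span lines, which means a multi-line prompt has to be stored with escaped line breaks. One way to avoid escaping by hand is to generate the file from Python. The sketch below assumes this approach: write_emd and load_emd are hypothetical helper names, and the only values taken from this guide are the key names and file names.

```python
import json
import os
import tempfile

# Keys the Process Text Using AI Model tool requires in every .emd file
REQUIRED_KEYS = ("InferenceFunction", "ModelType")


def write_emd(path, inference_function, sys_prompt):
    """Write an .emd file; json.dump escapes the prompt's line breaks."""
    emd = {
        "InferenceFunction": inference_function,
        "ModelType": "LLM",
        "sys_prompt": sys_prompt,  # custom key, read by initialize()
    }
    with open(path, "w", encoding="utf-8") as f:
        json.dump(emd, f, indent=2)


def load_emd(path):
    """Reload the file (raises on invalid JSON) and check the required keys."""
    with open(path, "r", encoding="utf-8") as f:
        emd = json.load(f)
    missing = [key for key in REQUIRED_KEYS if key not in emd]
    if missing:
        raise KeyError(f"Missing required .emd keys: {missing}")
    return emd


# Build the multi-line prompt in Python instead of escaping it by hand
fields = ["Sale Date", "Customer Name", "Product", "Quantity",
          "Unit Price", "Total Amount", "Tax Rate", "Payment Method"]
prompt = ("Extract the following fields from each sentence:\n"
          + "\n".join(fields)
          + "\nOutput the result in JSON format for each sentence.")

emd_path = os.path.join(tempfile.mkdtemp(), "LLMEntityExtractor.emd")
write_emd(emd_path, "MyLLMEntityExtractor.py", prompt)
emd = load_emd(emd_path)
print(emd["sys_prompt"].splitlines()[1])  # Sale Date
```

Reloading the file immediately after writing it catches a malformed file before it is zipped into a package.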

Because the .emd file must be valid JSON, the multi-line system prompt is written as a single string with escaped line breaks (\n) and escaped quotes (\").

```json
{
  "InferenceFunction": "MyLLMEntityExtractor.py",
  "ModelType": "LLM",
  "sys_prompt": "Extract the following fields from each sentence:\nSale Date\nCustomer Name\nProduct\nQuantity\nUnit Price\nTotal Amount\nTax Rate\nPayment Method\n\nOutput the result in JSON format for each sentence.\n\nExample Sentence:\n\"Today, Diana Fisher acquired 6 Monitor(s) at Traders joe, Greenvil ave, paying a total of 104580 INR. The payment was made via Cash.\"\n\nExpected Output:\n{\"Sale Date\": \"10-04-2024\", \"Customer Name\": \"Diana Fisher\", \"Products\": \"Monitor\", \"Total Amount\": 104580, \"Payment Method\": \"Cash\", \"Location\": \"Traders joe, Greenvil ave\", \"Store Name\": \"Traders joe\"}"
}
```

Once the .emd file is created, the next step is to create a custom Python NLP function.

Custom Python NLP function

To integrate with ArcGIS Pro, the model logic must be encapsulated in a Python class that implements the following required methods:

- __init__

- getParameterInfo

- initialize

- getConfiguration

- predict
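The following sections show each method on its own. In the .py file, all of them belong to a single class. The skeleton below sketches that layout. It assumes the class is named MyLLMEntityExtractor after the module, which is an assumption because the guide does not show the class statement. The method bodies are placeholders that the following sections fill in.

```python
class MyLLMEntityExtractor:
    """Layout of the custom NLP function; method bodies are placeholders."""

    def __init__(self, **kwargs):
        self.name = "GPT-based NER for Sales"
        self.description = "Named Entity Recognition for sales receipts"

    def getParameterInfo(self, **kwargs):
        # Returns the list of parameter dictionaries shown in the GeoAI tool
        return []

    def initialize(self, **kwargs):
        # Runs once; kwargs["model"] is the path to the .emd file
        self.emd_path = kwargs.get("model")

    def getConfiguration(self, **kwargs):
        # Receives the parameter values supplied by the user
        return kwargs

    def predict(self, feature_set, **kwargs):
        # Runs inference and returns the results as a FeatureSet
        raise NotImplementedError("inference is filled in by the predict section")


extractor = MyLLMEntityExtractor()
extractor.initialize(model="LLMEntityExtractor.emd")
print(extractor.getConfiguration(prompt="Extract the defined entities."))
# {'prompt': 'Extract the defined entities.'}
```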

We'll now create a custom NLP function to extract the following entities from scanned sales receipt images:

- Sale Date

- Customer Name

- Product

- Quantity

- Unit Price

- Total Amount

- Tax Rate

- Payment Method

To accomplish this, we’ll use the pre-trained GPT-4o large language model from OpenAI. The input consists of images of printed sales receipts containing the relevant information, along with a well-defined text prompt for the LLM. The model returns structured data for the entities listed above.

Imports

```python
import arcpy
import json
import base64
import keyring
import openai  # OpenAI client used in predict()
from arcgis.features import FeatureSet
from arcgis.learn import AIServiceConnection
```
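The predict function calls an encode_image helper that the listing does not define. The sketch below assumes the input field holds paths to local image files. encode_image matches the name used in predict. image_data_url is a hypothetical convenience function that takes the MIME type from the file extension instead of hard-coding image/jpeg.

```python
import base64
import mimetypes
import os
import tempfile


def encode_image(image_path):
    """Read an image file and return its bytes as a base64 ASCII string."""
    with open(image_path, "rb") as f:
        return base64.b64encode(f.read()).decode("ascii")


def image_data_url(image_path):
    """Build a data URL, guessing the MIME type from the file extension."""
    mime, _ = mimetypes.guess_type(image_path)
    # Fall back to JPEG, the type hard-coded in predict(), for unknown extensions
    return f"data:{mime or 'image/jpeg'};base64,{encode_image(image_path)}"


# Example: a throwaway file holding the first four bytes of a PNG header
sample = os.path.join(tempfile.mkdtemp(), "receipt.png")
with open(sample, "wb") as f:
    f.write(b"\x89PNG")
print(image_data_url(sample))  # data:image/png;base64,iVBORw==
```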

Define the __init__ function

The __init__ method initializes instance variables like name, description, and other attributes essential for the NLP function.

```python
def __init__(self, **kwargs):
    # Name and description of this NLP function
    self.name = "GPT-based NER for Sales"
    self.description = "Named Entity Recognition using GPT for extracting sales receipt information from the scanned images"
```

Define the initialize function

The initialize method is called at the start of the custom Python NLP function. Within this function, we will set up the necessary variables. It accepts two parameters via kwargs:

Parameters in kwargs

- model: The path to the Esri Model Definition (.emd) file.

- device: The name of the device (either GPU or CPU). This is particularly important for on-premises models.

The initialize method reads the Esri Model Definition (.emd) file and configures the essential variables needed for inference. This method is called only once, making it the ideal place to load any resources or dependencies required throughout the inference process.

```python
def initialize(self, **kwargs):
    # kwargs["model"] is the path to the .emd file
    json_file = kwargs["model"]
    with open(json_file, "r") as f:
        self.json_info = json.load(f)

    # Access the predefined system prompt from the .emd file
    self.SYSTEM_PROMPT = self.json_info["sys_prompt"]
```

Define the getParameterInfo function

The getParameterInfo function defines the parameters that will be exposed in the GeoAI tool, including their data types and allowable values. For our use case, it gathers the text prompt used to instruct the language model on which entities to extract from the input receipt text, as well as the connection file required to authenticate with the third-party model provider. Refer to the getParameterInfo section to get a detailed explanation of the getParameterInfo function.

```python
def getParameterInfo(self, **kwargs):
    params = [
        {
            "name": "prompt",
            "dataType": "string",
            "required": True,
            "displayName": "Prompt",
            "description": "Prompt",
            "value": """Extract the defined entities from the input text.""",
        },
        {
            "name": "ai_connection_file",
            "dataType": "string",
            "required": True,
            "displayName": "Path of the connection file",
            "description": "Path of the connection file",
            "value": "",
        },
    ]
    return params
```

Returns:

The method returns a list of dictionaries, each representing a parameter required by the custom NLP model.

Each dictionary contains the following key attributes:

- name: A string identifier for the parameter.

- dataType: The type of data the parameter accepts (e.g., string, boolean, list).

- value: The default value for the parameter.

- required: A boolean indicating whether the parameter is required.

- displayName: A user-friendly name shown in the GeoAI tool interface.

- domain: (Optional) A set of allowed values for the parameter, if applicable.

- description: A detailed explanation of what the parameter does and how it is used.
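A typo in one of these attribute names is easy to miss until the tool runs. The sketch below checks a getParameterInfo result against the attributes listed above. check_parameters is a hypothetical helper, not part of arcgis.learn. It checks only the key names and the type of required, and it treats domain as optional.

```python
# Attributes each parameter dictionary should carry; "domain" is optional
REQUIRED_PARAM_KEYS = {"name", "dataType", "value", "required", "displayName", "description"}


def check_parameters(params):
    """Return human-readable problems found in a getParameterInfo() result."""
    problems = []
    seen = set()
    for i, param in enumerate(params):
        missing = REQUIRED_PARAM_KEYS - set(param)
        if missing:
            problems.append(f"parameter {i}: missing {sorted(missing)}")
        if "required" in param and not isinstance(param["required"], bool):
            problems.append(f"parameter {i}: 'required' should be True or False")
        name = param.get("name")
        if name in seen:
            problems.append(f"parameter {i}: duplicate name {name!r}")
        seen.add(name)
    return problems


params = [
    {"name": "prompt", "dataType": "string", "required": True,
     "displayName": "Prompt", "description": "Prompt",
     "value": "Extract the defined entities from the input text."},
    {"name": "ai_connection_file", "dataType": "string", "required": True},
]
for problem in check_parameters(params):
    print(problem)  # parameter 1: missing ['description', 'displayName', 'value']
```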

Define the getConfiguration function

The getConfiguration method sets up and manages the parameters required by the NLP function. It receives keyword arguments (kwargs) containing the values of the parameters defined in getParameterInfo, as supplied by the user either through the GeoAI tool interface or programmatically.

This method is responsible for:

- Extracting input from the tool (e.g., prompt, AI connection file path).

- Storing these values in class-level variables or a configuration dictionary.

- Controlling how the model processes the input and generates the output based on the updated parameters.

By configuring these parameters, the method ensures that the function runs with the correct context and settings during inference.

For our use case, we are using a large language model (LLM) provided by an external AI service, GPT-4o from OpenAI. To enable communication between our custom Python NLP function and the OpenAI endpoint, we need a connection file. You can refer to the Create AI Service Connection File section for instructions on how to create one.

The connection file securely stores the credentials needed to authenticate with and initialize the third-party model. The following function reads these credentials from the connection file and stores them on the class instance.
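The function indexes two nested keys of the dictionary returned by AIServiceConnection.get_dict(). read_credentials is a hypothetical helper that sketches a more defensive version of that lookup: it fails with a clear message when a key is missing. The nesting is taken from getConfiguration, and the token value is a placeholder.

```python
def read_credentials(conn_params):
    """Return (api_key, model) from the dictionary produced by get_dict()."""
    try:
        api_key = conn_params["authenticationSecrets"]["token"]
        model = conn_params["serviceProviderProperties"]["model"]
    except KeyError as err:
        raise ValueError(f"Connection file is missing the key {err}") from err
    if not api_key:
        raise ValueError("Connection file does not contain an API token")
    return api_key, model


# Example dictionary with the same nesting; the token is a placeholder
sample = {
    "authenticationSecrets": {"token": "sk-placeholder"},
    "serviceProviderProperties": {"model": "gpt-4o"},
}
api_key, model = read_credentials(sample)
print(model)  # gpt-4o
```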

```python
def getConfiguration(self, **kwargs):
    # Get the ai_connection_file parameter value
    connection_file_path = kwargs.get("ai_connection_file", None)

    # Read the connection file using the AIServiceConnection class in arcgis.learn
    con = AIServiceConnection(connection_file_path)
    conn_param_v = con.get_dict()
    self.API_KEY = conn_param_v["authenticationSecrets"]["token"]
    self.model = conn_param_v["serviceProviderProperties"]["model"]

    # Get the prompt parameter value and prepend the system prompt from the .emd file
    self.prompt_txt = kwargs.get("prompt", "")
    self.prompt = f"{self.SYSTEM_PROMPT}\n\n{self.prompt_txt}"

    return kwargs
```

Define the predict function

The predict method performs inference, that is, it generates predictions with the NLP model. It receives a FeatureSet containing the input features (or rows, in the case of a table) and kwargs whose input_field entry names the field holding the input data. In this use case, that field contains the paths to the receipt images. The method returns the results as a FeatureSet object.
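You can try the per-image request and response handling in predict without ArcGIS or network access. The sketch below uses plain dictionaries. ENTITIES, build_messages, and to_feature are hypothetical names. Unlike the listing, to_feature keeps only the declared entity fields and converts every value to text, so each feature's attributes line up with the esriFieldTypeString fields declared for the output.

```python
import json

# Entities requested in the prompt; one output field per entity
ENTITIES = ["Sale Date", "Customer Name", "Product", "Quantity",
            "Unit Price", "Total Amount", "Tax Rate", "Payment Method"]


def build_messages(system_prompt, data_url):
    """System message carries the prompt; the user message carries the image."""
    return [
        {"role": "system", "content": system_prompt},
        {
            "role": "user",
            "content": [{"type": "image_url", "image_url": {"url": data_url}}],
        },
    ]


def to_feature(image_path, reply_text, entities=ENTITIES):
    """Convert the model's JSON reply into one feature with string attributes."""
    reply = json.loads(reply_text)
    attributes = {"inputpath": image_path}
    for name in entities:
        value = reply.get(name)
        if isinstance(value, (list, dict)):
            value = json.dumps(value)  # serialize nested values into a string
        # Missing entities become None (null); everything else becomes text
        attributes[name] = None if value is None else str(value)
    return {"attributes": attributes}


messages = build_messages("Extract the defined entities.", "data:image/jpeg;base64,AAAA")
reply = '{"Sale Date": "10-04-2024", "Total Amount": 104580, "Product": ["Monitor"]}'
feature = to_feature("receipt_01.jpg", reply)
print(feature["attributes"]["Total Amount"])  # 104580
```

This approach drops keys the model adds on its own, such as "Location". To keep those keys, add them to the entity list so they also get a declared field.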

```python
def predict(self, feature_set, **kwargs):
    # This listing uses the pre-1.0 openai interface (openai.ChatCompletion);
    # openai>=1.0 replaces it with OpenAI(api_key=...).chat.completions.create
    openai.api_key = self.API_KEY

    # Entities requested in the prompt; each becomes a string field in the output
    entities_to_extract = [
        "Sale Date", "Customer Name", "Product", "Quantity",
        "Unit Price", "Total Amount", "Tax Rate", "Payment Method",
    ]

    # The input field holds the paths of the receipt images
    field = kwargs["input_field"][0]
    image_list = feature_set.df[field].to_list()

    features_list = []
    for image in image_list:
        # Send each image inline as a base64-encoded data URL
        base64_image = encode_image(image)
        messages = [
            {"role": "system", "content": self.prompt},
            {
                "role": "user",
                "content": [
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{base64_image}"},
                    },
                ],
            },
        ]

        # Pass the message list itself (not a JSON string) and request a JSON object reply
        response = openai.ChatCompletion.create(
            model=self.model,
            messages=messages,
            temperature=0.2,
            response_format={"type": "json_object"},
        )

        # Parse the model's JSON reply; stringify list values so they fit text fields
        output = json.loads(response["choices"][0]["message"]["content"])
        output = {k: str(v) if isinstance(v, list) else v for k, v in output.items()}

        attribute_dict = {"inputpath": image}
        attribute_dict.update(output)
        features_list.append({"attributes": attribute_dict})

    # One string field per entity, plus the source image path
    all_fields = [{"name": name, "type": "esriFieldTypeString"} for name in entities_to_extract]
    all_fields.append({"name": "inputpath", "type": "esriFieldTypeString"})

    feature_dict = {
        "fields": all_fields,
        "geometryType": "",
        "features": features_list,
    }
    return FeatureSet.from_dict(feature_dict)
```

Create AI service connection file

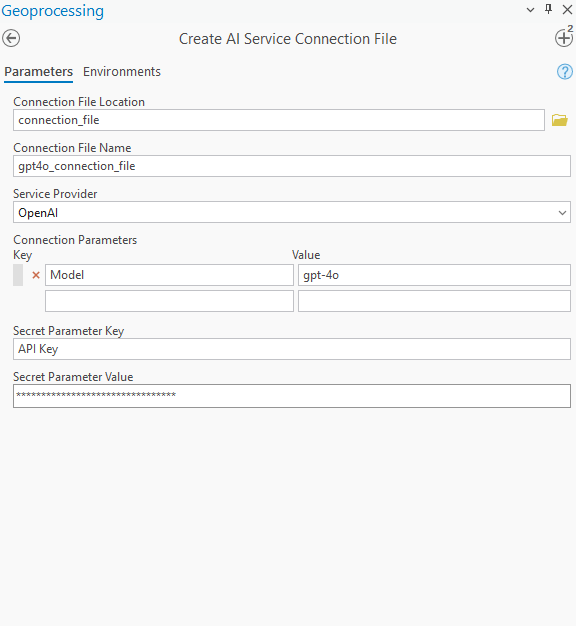

To establish a connection between the Python NLP function and the hosted AI service, a connection file is required. This file can be created using the Create AI Service Connection File tool, located in the Data Management toolbox under the General toolset. The tool securely stores access credentials and configuration settings in an encoded .ais file, eliminating the need for repeated manual entry. It supports multiple cloud-based AI providers and simplifies authentication across tasks while minimizing the risk of errors. For more details, refer to the Create AI Service Connection File tool documentation.

Let’s create a connection file for GPT-4o provided by OpenAI, along with a few other required configurations for our use case.

Once the tool finishes its execution, it will save the connection file in the specified location with the provided name.

Create the deep learning package

Organize the files

Follow these steps to prepare the .dlpk package:

- Create a folder: Create a folder containing the custom NLP function file (e.g., MyLLMEntityExtractor.py) and the Esri .emd file (e.g., LLMEntityExtractor.emd). The name of the folder must match the name of the .emd file. Example folder structure:

```
LLMEntityExtractor/
├── MyLLMEntityExtractor.py
└── LLMEntityExtractor.emd
```

- Zip the folder: Compress the files into a ZIP archive, then rename the .zip file to match the .emd file name, but with the .dlpk extension. Example final file name: LLMEntityExtractor.dlpk

This .dlpk file is now ready for use with the Process Text Using AI Model tool inside ArcGIS Pro.
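The packaging steps above can be scripted with the standard library. The sketch below makes two assumptions. First, build_dlpk is a hypothetical helper. Second, the folder itself becomes the top-level entry in the archive, which is one reading of "zip the folder". If your setup expects the files at the archive root instead, pass root_dir=folder and drop base_dir.

```python
import os
import shutil
import tempfile
import zipfile
from pathlib import Path


def build_dlpk(folder, out_dir=None):
    """Zip `folder` and rename the archive to <folder name>.dlpk."""
    folder = Path(folder).resolve()
    # The folder name must match the .emd file name
    emd = folder / f"{folder.name}.emd"
    if not emd.is_file():
        raise FileNotFoundError(f"Expected {emd.name} inside {folder}")
    out_dir = Path(out_dir).resolve() if out_dir else folder.parent
    # make_archive appends ".zip"; base_dir keeps the folder as the top-level entry
    zip_path = shutil.make_archive(
        str(out_dir / folder.name), "zip",
        root_dir=str(folder.parent), base_dir=folder.name,
    )
    dlpk_path = out_dir / f"{folder.name}.dlpk"
    os.replace(zip_path, dlpk_path)  # rename .zip to .dlpk, overwriting an old package
    return dlpk_path


# Example with empty placeholder files in a temporary directory
work = Path(tempfile.mkdtemp())
pkg = work / "LLMEntityExtractor"
pkg.mkdir()
(pkg / "LLMEntityExtractor.emd").write_text("{}", encoding="utf-8")
(pkg / "MyLLMEntityExtractor.py").write_text("", encoding="utf-8")

dlpk = build_dlpk(pkg)
print(dlpk.name)  # LLMEntityExtractor.dlpk
with zipfile.ZipFile(dlpk) as zf:
    names = zf.namelist()
print(names)
```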

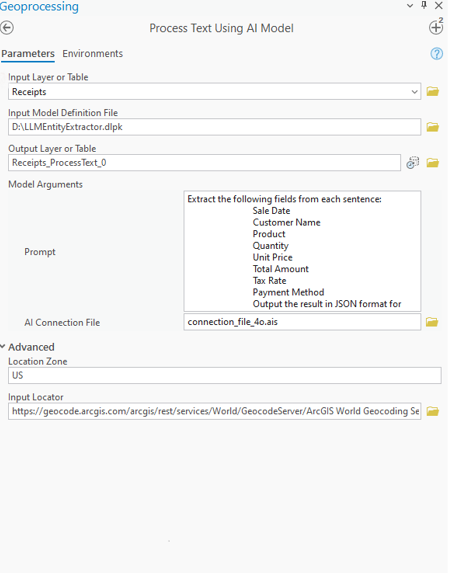

Run the Process Text Using AI Model tool

Run the tool with the desired parameters:

- Input Layer or Table – The folder containing the input images. → Receipts

- Input Model Definition File – The deep learning package (.dlpk) defining the entity extraction. → LLMEntityExtractor.dlpk

- Output Layer or Table – The table name where extracted results will be saved. → Receipts_ProcessText_0

- Model Arguments:

  - Prompt – Instructions to the model specifying which fields to extract from text. → Extract the following fields from each sentence: Sale Date, Customer Name, Product, Quantity, Unit Price, Total Amount, Tax Rate, Payment Method. Output the result in JSON format for each record

  - AI Connection File – Configuration file for connecting to the AI/LLM model server. → connection_file_4o.ais

- Location Zone – Regional setting to guide formatting or context, e.g., date formats. → US

- Input Locator – Geocoding service to interpret or normalize addresses. → Locator

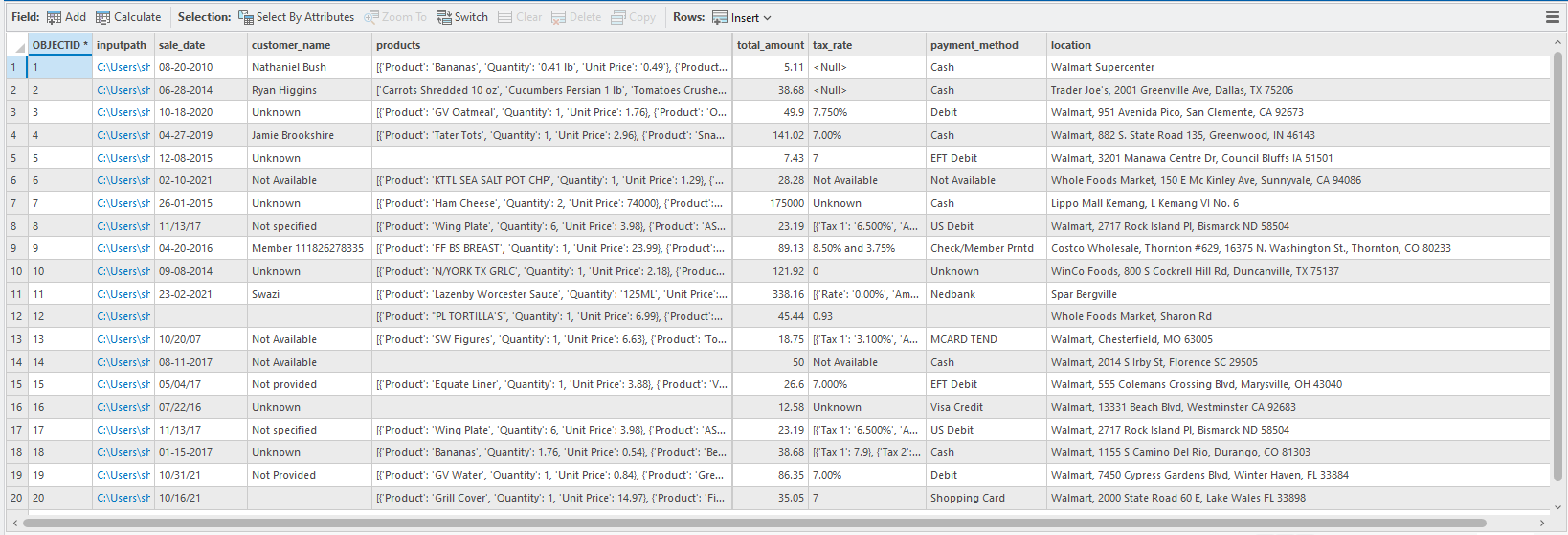

Results

After running the tool, here are the extracted results:

You can also choose to geocode the extracted locations onto the map.

Conclusion

This guide demonstrates how AI models like GPT-4o can be integrated into ArcGIS Pro to extract structured information from unstructured receipt images. By using the Process Text Using AI Model tool with a custom LLM wrapped in a Deep Learning Package (.dlpk), users can automate the extraction of key entities such as sale date, customer name, product details, and payment method.

This approach reduces the need for manual data entry or annotation, while delivering high-quality, analysis-ready outputs that can support a range of GIS and business workflows like sales analytics, inventory tracking, and customer insights. With built-in support for third-party AI services, ArcGIS Pro enables users to bring the latest in NLP and machine learning directly into their geospatial data pipelines.

References

- To learn more about how to create and integrate third-party models, refer to Use third party language models with ArcGIS.

- To learn more about the connection tool, refer to Create AI Service Connection File.