The arcgis.geoanalytics.manage_data submodule contains functions that are used for the day-to-day management of geographic and tabular data.

This toolset uses distributed processing to complete analytics on your GeoAnalytics Server.

Tool |

Description |

|---|---|

append_data |

The Append Data tool allows you to append features to an existing hosted layer in your ArcGIS Enterprise organization. Append Data allows you to update or modify existing datasets. |

calculate_fields |

The Calculate Field tool calculates field values on a new or existing field. The output will always be a new layer in your ArcGIS Enterprise portal contents. |

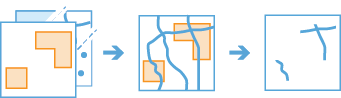

clip_layer |

The Clip Layer tool allows you to create subsets of your input features by clipping them to areas of interest. The output subset layer will be available in your ArcGIS Enterprise organization. |

copy_to_data_store |

The Copy To Data Store tool is a convenient way to copy datasets to a layer in your portal. Copy to Data Store creates an item in your content containing your layer. |

dissolve_boundaries |

The Dissolve Boundaries tool merges area features that either intersect or have the same field values. |

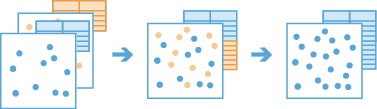

merge_layers |

The Merge Layers tool combines two feature layers to create a single output layer. The tool requires that both layers have the same geometry type (tabular, point, line, or polygon). I |

overlay_data |

The Overlay Layers tool combines two layers into a single layer using one of five methods: Intersect, Erase, Union, Identity, or Symmetric Difference. |

run_python_script |

The run python script tool executes a Python script directly in an ArcGIS GeoAnalytics server site . |

Note: The purpose of the notebook is to show examples of the different tools that can be run on an example dataset.

Necessary imports

# connect to Enterprise GIS

from arcgis.gis import GIS

import arcgis.geoanalytics

portal_gis = GIS(profile="your_enterprise_profile")
item = portal_gis.content.get('5c6ef8ef57934990b543708f815d606e')

usa_counties_lyr = item.layers[0]
usa_counties_lyr

<FeatureLayer url:"https://pythonapi.playground.esri.com/server/rest/services/Hosted/usaCounties/FeatureServer/0">

search_result = portal_gis.content.search("", item_type = "big data file share")

search_result

[<Item title:"bigDataFileShares_hurricanes_dask_shp" type:Big Data File Share owner:atma.mani>, <Item title:"bigDataFileShares_NYC_taxi_data15" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_hurricanes_dask_csv" type:Big Data File Share owner:atma.mani>, <Item title:"bigDataFileShares_all_hurricanes" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_ServiceCallsOrleans" type:Big Data File Share owner:portaladmin>, <Item title:"bigDataFileShares_ServiceCallsOrleansTest" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_calls" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_Samples_Data" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_GA_Data" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_GA_Data" type:Big Data File Share owner:api_data_owner>]

air_lyr = search_result[-2].layers[0]
air_lyr

<Layer url:"https://pythonapi.playground.esri.com/ga/rest/services/DataStoreCatalogs/bigDataFileShares_GA_Data/BigDataCatalogServer/air_quality">
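The search in the Merge Layers section returns a different list of big data file shares than the one above. Selecting a layer by position, as with search_result[-2], can therefore silently pick the wrong dataset. A sturdier approach is to match on title and owner. The sketch below is a small hypothetical helper that works on any objects with title and owner attributes, as arcgis Item objects have. The stand-in items exist only so the example runs offline. Title plus owner can still be ambiguous when one owner has two items with the same title.

```python
from collections import namedtuple


def find_item(items, title, owner=None):
    """Return the first item whose title (and, if given, owner) matches.

    Works with any objects exposing `title` and `owner` attributes,
    such as the Item objects returned by content.search().
    """
    for item in items:
        if item.title == title and (owner is None or item.owner == owner):
            return item
    suffix = f" owned by {owner!r}" if owner else ""
    raise LookupError(f"No item titled {title!r}{suffix}")


# Hypothetical stand-ins for search results, for illustration only.
FakeItem = namedtuple("FakeItem", ["title", "owner"])
items = [
    FakeItem("bigDataFileShares_GA_Data", "arcgis_python"),
    FakeItem("bigDataFileShares_GA_Data", "api_data_owner"),
]

ga_item = find_item(items, "bigDataFileShares_GA_Data", owner="arcgis_python")
print(ga_item.owner)  # arcgis_python
```

With live results, find_item(search_result, "bigDataFileShares_GA_Data", owner="arcgis_python").layers[0] should select the same layer that search_result[-2] selected above.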

Append Data

The Append Data tool allows you to append features to an existing hosted layer in your ArcGIS Enterprise organization and update or modify existing datasets.

The append_data operation appends tabular, point, line, or polygon data to an existing layer. The input layer must be a hosted feature layer. The tool will add the appended data as rows to the input layer. No new output layer will be created.

from arcgis.geoanalytics.manage_data import append_data

input_lyr = portal_gis.content.get('b39deed705144a2a90c5eaf5a44f5a14').layers[0]
append_lyr = portal_gis.content.get('78d4b1914bba4e09b9e8006fa6a3157c').layers[0]
append_data(input_layer=input_lyr, append_layer=append_lyr)

Attaching log redirect

Log level set to DEBUG

{"messageCode":"BD_101117","message":"No field mapping specified. Default field mapping will be applied."}

{"messageCode":"BD_101125","message":"The following fields have not been appended: INSTANT_DATETIME, globalid, OBJECTID.","params":{"fieldNames":"INSTANT_DATETIME, globalid, OBJECTID"}}

{"messageCode":"BD_101124","message":"The following fields are missing from the append layer and field mapping: pres_wmo_, Wind, size, latitude, pres_wmo1, iso_time, longitude, wind_wmo_, wind_wmo1.","params":{"missingFields":"pres_wmo_, Wind, size, latitude, pres_wmo1, iso_time, longitude, wind_wmo_, wind_wmo1"}}

{"messageCode":"BD_101132","message":"The following append layer fields have not been appended due to a field name mismatch: Field1, longitude_, pressure_m, ISO_time_s, latitude_m, Current_Ba, eye_dia_mi, wind_knots.","params":{"mismatchedFields":"Field1, longitude_, pressure_m, ISO_time_s, latitude_m, Current_Ba, eye_dia_mi, wind_knots"}}

Detaching log redirect

{"messageCode":"BD_101051","message":"Possible issues were found while reading 'appendLayer'.","params":{"paramName":"appendLayer"}}

{"messageCode":"BD_101054","message":"Some records have either missing or invalid geometries."}

True
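The messages above show that, without a field mapping, only same-named fields were appended. Fields such as wind_knots and latitude_m were skipped as name mismatches. Before running the tool, you can compare the two schemas locally. The sketch below assumes matching is by exact field name and that OBJECTID and globalid are system-managed and ignored. It is a preview aid only, not the tool's actual mapping logic. The sample field lists are hypothetical, loosely modeled on the messages.

```python
def preview_field_matching(input_fields, append_fields, ignore=("OBJECTID", "globalid")):
    """Compare two field-name lists by exact name.

    Returns (matched, missing_from_append, unmatched_in_append).
    Names in `ignore` (compared case-insensitively) are skipped on both sides.
    """
    ignored = {name.lower() for name in ignore}
    inp = [f for f in input_fields if f.lower() not in ignored]
    app = [f for f in append_fields if f.lower() not in ignored]
    inp_set, app_set = set(inp), set(app)
    matched = [f for f in inp if f in app_set]      # would be appended
    missing = [f for f in inp if f not in app_set]  # left empty in appended rows
    unmatched = [f for f in app if f not in inp_set]  # dropped from the append layer
    return matched, missing, unmatched


matched, missing, unmatched = preview_field_matching(
    ["OBJECTID", "basin", "latitude", "Wind"],
    ["OBJECTID", "basin", "latitude_m", "wind_knots"],
)
print(matched, missing, unmatched)
```

For hosted feature layers, the name lists could come from something like [f["name"] for f in input_lyr.properties.fields]. Big data file share layers may describe their schema differently.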

Calculate Fields

The calculate_fields operation works with a layer to create and populate a new field or edit an existing field. The output is a new feature service that is the same as the input features, but with the newly calculated values.

from arcgis.geoanalytics.manage_data import calculate_fields

# hurr2 is the bigDataFileShares_all_hurricanes layer (index 3 in the search results above)
hurr2 = search_result[3].layers[0]

calculate_fields(input_layer=hurr2,
                 field_name="avg",
                 data_type="Double",
                 expression='max($feature["wind_wmo1"],$feature["pres_wmo1"])')

Attaching log redirect
Log level set to DEBUG
Detaching log redirect
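Distributed jobs are slow to iterate on, so it can help to check what an expression will produce on a few hand-made rows first. The sketch below is a plain-Python stand-in for the Arcade expression used above. Returning None when either value is missing is an assumption made for this sketch, not documented Arcade behavior. The sample values are hypothetical.

```python
def local_max_expression(feature, a="wind_wmo1", b="pres_wmo1"):
    """Plain-Python analogue of the Arcade expression max($feature[a], $feature[b]).

    Missing values yield None here; that null handling is an assumption.
    """
    va, vb = feature.get(a), feature.get(b)
    if va is None or vb is None:
        return None
    return max(va, vb)


sample = [
    {"wind_wmo1": 45.0, "pres_wmo1": 1002.0},
    {"wind_wmo1": None, "pres_wmo1": 990.0},
]
results = [local_max_expression(f) for f in sample]
print(results)  # [1002.0, None]
```

Suppose pres_wmo1 holds pressures in millibars and wind_wmo1 holds wind speeds in knots. A local run like this makes it easy to see that the pressure value will usually be the maximum.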

Clip Layer

The clip_layer tool allows you to create subsets of your input features by clipping them to areas of interest. The output subset layer will be available in your ArcGIS Enterprise organization.
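For point inputs such as the service calls below, clipping amounts to keeping the points that fall inside the clip polygons. The toy sketch below shows that idea with an even-odd ray-casting test on Esri JSON polygons ({"rings": [...]}), so inner rings act as holes. It is not how clip_layer is implemented. It also ignores the geometry cutting that clipping lines or polygons requires. The coordinates are hypothetical.

```python
def point_in_ring(x, y, ring):
    """Ray-casting test: does a horizontal ray from (x, y) cross the ring an odd number of times?"""
    inside = False
    j = len(ring) - 1
    for i in range(len(ring)):
        xi, yi = ring[i][0], ring[i][1]
        xj, yj = ring[j][0], ring[j][1]
        if (yi > y) != (yj > y):  # edge straddles the ray's y, so yi != yj
            x_cross = xi + (y - yi) * (xj - xi) / (yj - yi)
            if x < x_cross:
                inside = not inside
        j = i
    return inside


def point_in_polygon(x, y, polygon):
    """Even-odd rule across all rings, so points inside holes are excluded."""
    inside = False
    for ring in polygon["rings"]:
        if point_in_ring(x, y, ring):
            inside = not inside
    return inside


def clip_points(points, polygons):
    """Keep only points inside at least one clip polygon."""
    return [p for p in points
            if any(point_in_polygon(p["x"], p["y"], poly) for poly in polygons)]


square_with_hole = {"rings": [
    [[0, 0], [0, 10], [10, 10], [10, 0], [0, 0]],  # outer ring
    [[4, 4], [6, 4], [6, 6], [4, 6], [4, 4]],      # hole
]}
points = [{"x": 1, "y": 1}, {"x": 5, "y": 5}, {"x": 11, "y": 5}]
clipped = clip_points(points, [square_with_hole])
print(clipped)  # [{'x': 1, 'y': 1}]
```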

from arcgis.geoanalytics.manage_data import clip_layer

from datetime import datetime as dt

search_result = portal_gis.content.search("bigDataFileShares_ServiceCallsOrleans", item_type = "big data file share")[0]
search_result

search_result.layers

[<Layer url:"https://pythonapi.playground.esri.com/ga/rest/services/DataStoreCatalogs/bigDataFileShares_ServiceCallsOrleans/BigDataCatalogServer/yearly_calls">]

calls = search_result.layers[0]
block_grp_item = portal_gis.content.get('9975b4dd3ca24d4bbe6177b85f9da7bb')

blk_grp_lyr = block_grp_item.layers[0]
blk_grp_lyr.query(as_df=True)

| | fid | statefp | countyfp | tractce | blkgrpce | geoid | namelsad | mtfcc | funcstat | aland | awater | intptlat | intptlon | globalid | OBJECTID | SHAPE |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 2022 | 22 | 071 | 004500 | 1 | 220710045001 | Block Group 1 | G5030 | S | 173150.0 | 0.0 | +29.9715423 | -090.0838705 | {E8B3C635-D935-62F7-168F-64EDC2BED042} | 1 | {"rings": [[[-90.08673061729473, 29.9701864174... |
| 1 | 2209 | 22 | 071 | 013500 | 3 | 220710135003 | Block Group 3 | G5030 | S | 214346.0 | 0.0 | +29.9573925 | -090.0664905 | {C253C889-C96C-6493-7DD3-1F0CB06A74BB} | 2 | {"rings": [[[-90.06992661254384, 29.9589464152... |
| 2 | 2300 | 22 | 071 | 001722 | 2 | 220710017222 | Block Group 2 | G5030 | S | 290122.0 | 0.0 | +30.0167107 | -089.9915777 | {7D29E3F0-9C17-BC5D-86E9-2F604B7B6682} | 3 | {"rings": [[[-89.99454259416906, 30.0191794304... |
| 3 | 2322 | 22 | 071 | 002200 | 2 | 220710022002 | Block Group 2 | G5030 | S | 119731.0 | 0.0 | +29.9804678 | -090.0482377 | {5A369F6C-B416-ED2A-EEF9-AE2AC8A13BE9} | 4 | {"rings": [[[-90.04979560782573, 29.9822134209... |
| 4 | 2792 | 22 | 071 | 009600 | 3 | 220710096003 | Block Group 3 | G5030 | S | 98006.0 | 0.0 | +29.9195453 | -090.0922712 | {C3B67E6D-30B1-9C16-1E93-27A02A7869A5} | 5 | {"rings": [[[-90.09409861704567, 29.9214634062... |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 531 | 170 | 22 | 071 | 003000 | 2 | 220710030002 | Block Group 2 | G5030 | S | 190414.0 | 0.0 | +29.9798908 | -090.0617835 | {F6A152A8-6629-7E38-E0EB-6C20D43EB4F5} | 799 | {"rings": [[[-90.06492061151569, 29.9813114200... |
| 532 | 155 | 22 | 071 | 002501 | 4 | 220710025014 | Block Group 4 | G5030 | S | 216634.0 | 0.0 | +30.0143991 | -090.0571656 | {E0880AD9-1B54-18C1-830B-3FBA14395285} | 820 | {"rings": [[[-90.06078861197408, 30.0155984278... |
| 533 | 2660 | 22 | 071 | 012600 | 1 | 220710126001 | Block Group 1 | G5030 | S | 179117.0 | 0.0 | +29.9445416 | -090.1295354 | {64CA7D7F-FBA7-2614-D997-6D55B9D65DF8} | 829 | {"rings": [[[-90.13190562891597, 29.9451464105... |
| 534 | 2161 | 22 | 071 | 001800 | 1 | 220710018001 | Block Group 1 | G5030 | S | 211600.0 | 0.0 | +29.9673921 | -090.0537128 | {16764B95-5EEE-DCD9-21D1-814A00C6A7FE} | 859 | {"rings": [[[-90.05698460887318, 29.9687144176... |
| 535 | 2841 | 22 | 071 | 012102 | 1 | 220710121021 | Block Group 1 | G5030 | S | 349132.0 | 0.0 | +29.9410166 | -090.1210713 | {C809D6D7-C465-2780-AA58-568B66AD4521} | 889 | {"rings": [[[-90.12515062661612, 29.9422734098... |

536 rows × 16 columns
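The clip call that follows keeps its output name unique by appending dt.now().microsecond. That suffix has only a million possible values, so two runs can occasionally produce the same name. One hedged alternative is a random suffix from the standard library's uuid module. The helper name below is hypothetical.

```python
import uuid


def unique_output_name(base):
    """Append an 8-character random hex suffix so repeated runs are unlikely to collide."""
    return f"{base}_{uuid.uuid4().hex[:8]}"


name_a = unique_output_name("service calls in new Orleans")
name_b = unique_output_name("service calls in new Orleans")
print(name_a, name_b)
```

It could replace the string concatenation, as in output_name=unique_output_name("service calls in new Orleans").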

clip_result = clip_layer(calls, blk_grp_lyr, output_name="service calls in new Orleans" + str(dt.now().microsecond))

Copy To Data Store

The Copy To Data Store tool is a convenient way to copy datasets to a layer in your portal. Copy to Data Store creates an item in your content containing your copied dataset layer.

from arcgis.geoanalytics.manage_data import copy_to_data_store

# hurr1 and hurr2 are the hurricane layers retrieved in the Merge Layers section below
copy_to_data_store(hurr2)

Attaching log redirect
Log level set to DEBUG
Detaching log redirect

copy_to_data_store(hurr1)

Attaching log redirect

Log level set to DEBUG

Detaching log redirect

{"messageCode":"BD_101051","message":"Possible issues were found while reading 'inputLayer'.","params":{"paramName":"inputLayer"}}

{"messageCode":"BD_101054","message":"Some records have either missing or invalid geometries."}

Dissolve Boundaries

The Dissolve Boundaries tool merges area features that either intersect or have the same field values.

from arcgis.geoanalytics.manage_data import dissolve_boundaries

dissolve_boundaries(input_layer=blk_grp_lyr,
                    dissolve_fields='countyfp',
                    output_name='dissolved by countyfp')

Attaching log redirect
Log level set to DEBUG
Detaching log redirect
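Dissolving by a field groups features that share that field's value. The sketch below mirrors only the attribute side of that grouping: it counts features and totals aland per countyfp value. Merging the geometries is left to the server. The three records reuse values from the block group table above. Every row shown there has countyfp 071, so dissolving by countyfp will probably produce a single feature for this layer.

```python
from collections import defaultdict


def dissolve_attributes(records, dissolve_field, sum_field):
    """Group records by `dissolve_field`, counting members and summing `sum_field`.

    Attribute grouping only; geometry union is not performed here.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for rec in records:
        key = rec[dissolve_field]
        totals[key] += rec[sum_field]
        counts[key] += 1
    return {key: {"count": counts[key], sum_field: totals[key]} for key in totals}


records = [
    {"countyfp": "071", "aland": 173150.0},
    {"countyfp": "071", "aland": 214346.0},
    {"countyfp": "071", "aland": 290122.0},
]
summary = dissolve_attributes(records, "countyfp", "aland")
print(summary)  # {'071': {'count': 3, 'aland': 677618.0}}
```

With live data, the records could come from blk_grp_lyr.query(as_df=True).to_dict("records").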

Merge Layers

The Merge Layers tool combines two feature layers to create a single output layer. The tool requires that both layers have the same geometry type (tabular, point, line, or polygon). If time is enabled on one layer, the other layer must also be time enabled and have the same time type (instant or interval). The result will always contain all fields from the input layer. All fields from the merge layer will be included by default, or you can specify custom merge rules to define the resulting schema.
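The requirements above can be checked before submitting a job. The sketch below is a hypothetical pre-flight check. It assumes each layer's properties look like a hosted feature layer's REST properties: a "geometryType" key that is absent for tables, and an optional "timeInfo" whose "endTimeField" is set only for interval time. Big data file share layers may describe geometry and time differently, so treat the key names as assumptions to adapt.

```python
def time_type(time_info):
    """Classify a timeInfo dict: 'interval' if it names an end time field, else 'instant'."""
    return "interval" if time_info.get("endTimeField") else "instant"


def merge_problems(props_a, props_b):
    """Return a list of reasons the two layers could not be merged (empty if compatible)."""
    problems = []
    if props_a.get("geometryType") != props_b.get("geometryType"):
        problems.append("geometry types differ")
    time_a, time_b = props_a.get("timeInfo"), props_b.get("timeInfo")
    if (time_a is None) != (time_b is None):
        problems.append("only one layer is time enabled")
    elif time_a is not None and time_type(time_a) != time_type(time_b):
        problems.append("time types differ (instant vs interval)")
    return problems


# Hypothetical property dicts for illustration.
points_instant = {"geometryType": "esriGeometryPoint", "timeInfo": {"startTimeField": "iso_time"}}
points_interval = {"geometryType": "esriGeometryPoint",
                   "timeInfo": {"startTimeField": "t0", "endTimeField": "t1"}}
polygons = {"geometryType": "esriGeometryPolygon"}

print(merge_problems(points_instant, points_instant))   # []
print(merge_problems(points_instant, points_interval))  # ['time types differ (instant vs interval)']
print(merge_problems(points_instant, polygons))         # ['geometry types differ', 'only one layer is time enabled']
```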

from arcgis.geoanalytics.manage_data import merge_layers

search_result = portal_gis.content.search("", item_type = "big data file share")
search_result

[<Item title:"bigDataFileShares_hurricanes_dask_shp" type:Big Data File Share owner:atma.mani>, <Item title:"bigDataFileShares_NYC_taxi_data15" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_hurricanes_dask_csv" type:Big Data File Share owner:atma.mani>, <Item title:"bigDataFileShares_all_hurricanes" type:Big Data File Share owner:api_data_owner>, <Item title:"bigDataFileShares_ServiceCallsOrleans" type:Big Data File Share owner:portaladmin>, <Item title:"bigDataFileShares_ServiceCallsOrleansTest" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_GA_Data" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_GA_Data" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_Chicago_Crimes" type:Big Data File Share owner:arcgis_python>, <Item title:"bigDataFileShares_csv_table" type:Big Data File Share owner:arcgis_python>]

hurr1 = search_result[0].layers[0]
hurr2 = search_result[3].layers[0]
merge_layers(hurr1, hurr2, output_name='merged layers')

Attaching log redirect

Log level set to DEBUG

Detaching log redirect

{"messageCode":"BD_101051","message":"Possible issues were found while reading 'inputLayer'.","params":{"paramName":"inputLayer"}}

{"messageCode":"BD_101054","message":"Some records have either missing or invalid geometries."}

Overlay Data

The Overlay Layers tool combines two layers into a single layer using one of five methods: Intersect, Erase, Union, Identity, or Symmetric Difference.
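The five methods differ in which parts of the two inputs survive. The toy sketch below models each layer as a set of grid-cell IDs standing in for areas. For each output cell it records which inputs cover it, as a stand-in for the attributes each output piece carries. This is an illustration of the set logic only, not the tool's implementation. Identity keeps all of the first layer, and the pieces that overlap the second layer carry both layers' attributes.

```python
def overlay(a, b, method):
    """Toy overlay of two 'layers' given as sets of cell IDs.

    Returns {cell: sources}, where sources names the inputs covering that cell.
    """
    def sources(cell):
        return tuple(name for name, layer in (("A", a), ("B", b)) if cell in layer)

    keep = {
        "intersect": a & b,             # only where both layers overlap
        "erase": a - b,                 # A with B's area removed
        "union": a | b,                 # everything from both layers
        "identity": set(a),             # all of A; overlaps also carry B
        "symmetric difference": a ^ b,  # areas covered by exactly one layer
    }[method.lower()]
    return {cell: sources(cell) for cell in sorted(keep)}


layer_a, layer_b = {1, 2, 3}, {3, 4}
for method in ("Intersect", "Erase", "Union", "Identity", "Symmetric Difference"):
    print(method, overlay(layer_a, layer_b, method))
```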

from arcgis.geoanalytics.manage_data import overlay_data

overlay_data(calls, blk_grp_lyr, output_name='intersected features')

Run Python Script

The run_python_script method executes a Python script directly in an ArcGIS GeoAnalytics Server site. The script can create an analysis pipeline by chaining together multiple GeoAnalytics tools without writing intermediate results to a data store. The tool can also distribute Python functionality across the GeoAnalytics Server site.

GeoAnalytics Server installs a Python 3.6 environment that this tool uses. The environment includes Spark, the compute platform that distributes analysis across multiple cores of one or more machines in your GeoAnalytics Server site. The Spark version depends on the server release: some releases ship Spark 2.2.0, while the job log below reports Spark 2.4.4. The environment also includes the pyspark module, which provides a collection of distributed analysis tools for data management, clustering, regression, and more. The run_python_script task automatically imports the pyspark module so you can interact with it directly.

When using the geoanalytics and pyspark packages, most functions return analysis results as in-memory Spark DataFrames. You can write these DataFrames to a data store or continue processing them in the script. This lets you chain multiple geoanalytics and pyspark tools and write out only the final result, without creating bulky intermediate result layers.
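The chaining idea can be shown without a server: each step passes its result to the next in memory, and only the final result is kept. The plain-Python sketch below filters rows to the PM2.5 parameter and then averages "Sample Measurement" per group. Grouping by the "County Code" attribute stands in for the spatial Contains join a GeoAnalytics script would perform, and the function names and sample rows are hypothetical. Real scripts operate on Spark DataFrames, not lists of dicts.

```python
from collections import defaultdict
from statistics import mean


def filter_rows(rows, field, value):
    """Step 1: keep rows whose `field` equals `value`."""
    return [r for r in rows if r[field] == value]


def mean_by(rows, group_field, value_field):
    """Step 2: average `value_field` within each `group_field` group."""
    groups = defaultdict(list)
    for r in rows:
        groups[r[group_field]].append(r[value_field])
    return {key: mean(values) for key, values in groups.items()}


rows = [
    {"County Code": 71, "Parameter Name": "PM2.5 - Local Conditions", "Sample Measurement": 8.0},
    {"County Code": 71, "Parameter Name": "PM2.5 - Local Conditions", "Sample Measurement": 12.0},
    {"County Code": 71, "Parameter Name": "Ozone", "Sample Measurement": 0.04},
    {"County Code": 33, "Parameter Name": "PM2.5 - Local Conditions", "Sample Measurement": 5.0},
]

# Chain the steps; no intermediate result is stored anywhere.
result = mean_by(filter_rows(rows, "Parameter Name", "PM2.5 - Local Conditions"),
                 "County Code", "Sample Measurement")
print(result)  # {71: 10.0, 33: 5.0}
```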

from arcgis.geoanalytics.manage_data import run_python_script

The function below filters the data to rows that report the PM2.5 pollutant. To find the average PM2.5 value of each county, we use the join_features tool. Finally, we write the output to the data store.

def average():
    # `layers` and `geoanalytics` are provided by the Run Python Script environment;
    # layers[0] is air_lyr and layers[1] is usa_counties_lyr, in the order passed below
    df = layers[0]
    df = df.filter(df['Parameter Name'] == 'PM2.5 - Local Conditions')  # pyspark filter
    # join each county to the PM2.5 records it contains and average their measurements
    res = geoanalytics.join_features(target_layer=layers[1],
                                     join_layer=df,
                                     join_operation="JoinOneToOne",
                                     summary_fields=[{'statisticType': 'mean', 'onStatisticField': 'Sample Measurement'}],
                                     spatial_relationship='Contains')
    # write only the final result to the data store
    res.write.format("webgis").save("average_pm_by_county")

run_python_script(average, [air_lyr, usa_counties_lyr])

[{'type': 'esriJobMessageTypeInformative',

'description': 'Executing (RunPythonScript): RunPythonScript "def average():\n df = layers[0]\n df = df.filter(df[\'Parameter Name\'] == \'PM2.5 - Local Conditions\') #pyspark filter\n res = geoanalytics.join_features(target_layer=layers[1], \n join_layer=df, \n join_operation="JoinOneToOne",\n summary_fields=[{\'statisticType\' : \'mean\', \'onStatisticField\' : \'Sample Measurement\'}],\n spatial_relationship=\'Contains\')\n res.write.format("webgis").save("average_pm_by_county")\n\naverage()" https://pythonapi.playground.esri.com/ga/rest/services/DataStoreCatalogs/bigDataFileShares_GA_Data/BigDataCatalogServer/air_quality;https://pythonapi.playground.esri.com/server/rest/services/Hosted/usaCounties/FeatureServer/0 "{"defaultAggregationStyles": false}"'},

{'type': 'esriJobMessageTypeInformative',

'description': 'Start Time: Wed Jun 30 07:44:34 2021'},

{'type': 'esriJobMessageTypeWarning',

'description': 'Attaching log redirect'},

{'type': 'esriJobMessageTypeWarning',

'description': 'Log level set to DEBUG'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Current Service Environment: GPServiceEnvironment(j5b2aab719ff84419839561c5a6fa9ee9,/gisdata/arcgisserver/directories/arcgisjobs/system/geoanalyticstools_gpserver/j5b2aab719ff84419839561c5a6fa9ee9,Some(GPServiceRequest(Some(wqOe_bYgjcaMUDm0EbI1DovAnWq3vca9QZag3aT6irmklGcUzQSqJPAM1JSyugDLmWK0uH_I4pU4qnrc4-8emj_YLpimUq1-RLRL3k6PkRuQAtzrkg_QVILHkSFkFDSt),None,Some(http))),System/GeoAnalyticsTools,GPServer,Map(jobsVirtualDirectory -> /rest/directories/arcgisjobs, jobsDirectory -> /gisdata/arcgisserver/directories/arcgisjobs, virtualOutputDir -> /rest/directories/arcgisoutput, showMessages -> Info, outputDir -> /gisdata/arcgisserver/directories/arcgisoutput, javaHeapSize -> 2048, maximumRecords -> 1000, toolbox -> ${AGSSERVER}/ArcToolBox/Services/GeoAnalytics Tools.tbx, executionType -> Asynchronous, renewTokens -> true, _debugModeEnabled -> true))'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Running on 172.31.22.24'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Found python binary in AGS system properties: /home/arcgis/anaconda3/envs/ga2/bin/python'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Computed Spark resources in 102ms'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Acquiring GAContext'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Cached context is null, creating'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Creating new context'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Destroying any running contexts'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] Adding authentication info to SparkContext'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:35] \r\n spark.cores.max = 6,\r\n spark.executor.memory = 25100m\n spark.dynamicAllocation.minExecutors = None\n spark.authenticate = Some(true)\n spark.ui.enabled = None\n spark.fileserver.port = Some(56540)\n spark.driver.port = Some(56541)\n spark.executor.port = Some(56542)\n spark.blockManager.port = Some(56543)\n '},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Initialized Spark 2.4.4'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Attaching progress listener to SparkContext'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:37] Using status file '/gisdata/arcgisserver/directories/arcgisjobs/system/geoanalyticstools_gpserver/j5b2aab719ff84419839561c5a6fa9ee9/status.dat' to track cancel"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:37] Executing function 'RunPythonScript'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Client supplied query filter: fields=*;where=;extent=;interval=;spatial-filters='},

{'type': 'esriJobMessageTypeInformative',

'description': 'Using URL based GPRecordSet param: https://pythonapi.playground.esri.com/ga/rest/services/DataStoreCatalogs/bigDataFileShares_GA_Data/BigDataCatalogServer/air_quality'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Trying handler: com.esri.arcgis.gae.ags.layer.input.ShortCircuitFSLayerInputHandler$@299fb73e'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Trying handler: com.esri.arcgis.gae.ags.layer.input.HostedFSLayerInputHandler$@4be79210'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Trying handler: com.esri.arcgis.gae.ags.layer.input.RemoteFSLayerInputHandler$@4d7c1897'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Trying handler: com.esri.arcgis.gae.ags.layer.input.BigDataFileShareLayerInputHandler$@c0e37d4'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Detected catalog server path (share=bigDataFileShares_GA_Data,dataset=air_quality)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Generating new token to access the hosting server from this federated server'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Found data store ID: 0e7a861d-c1c5-4acc-869d-05d2cebbdbee'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Using input handler: BigDataFileShareLayerInputHandler'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Loading data store factory'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:37] Attempting to load data store factory for '/bigDataFileShares/GA_Data' of type 'fileShare'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:37] Loading data store using [com.esri.arcgis.gae.ags.datastore.manifest.ManifestDataStoreFactory]'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:37] Initializing file system 'file'"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:38] Initialized with qualified path 'file-eb43045c-bffa-4bba-aac4-756a210ffa7b:/mnt/172.31.22.189/gisdata/geoanalytics/samples'"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:38] Loading dataset 'air_quality' from datasource FileSystemDataSource"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:38] Loading file system dataset with path 'file-eb43045c-bffa-4bba-aac4-756a210ffa7b:/mnt/172.31.22.189/gisdata/geoanalytics/samples/air_quality'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] [Spark] Executor added (host=172.31.22.24,cores=6)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] Input layer has allocated 1147 read task(s)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] Input layer known upper bounds (countEstimate=0,spatialExtent=N/A,temporalExtent=N/A)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] Found 2 pieces of metadata: DatasetSourceMetadata,ServiceLayerMetadata'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] Client supplied query filter: fields=*;where=;extent=;interval=;spatial-filters='},

{'type': 'esriJobMessageTypeInformative',

'description': 'Using URL based GPRecordSet param: https://pythonapi.playground.esri.com/server/rest/services/Hosted/usaCounties/FeatureServer/0'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:39] Trying handler: com.esri.arcgis.gae.ags.layer.input.ShortCircuitFSLayerInputHandler$@299fb73e'},

{'type': 'esriJobMessageTypeInformative',

'description': '[ERROR|07:44:49] Failed to connect to managed data store: The connection attempt failed.'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:49] Failed to connect to managed data store: The connection attempt failed.'},

{'type': 'esriJobMessageTypeInformative',

'description': 'The connection attempt failed.'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG]org.postgresql.util.PSQLException: The connection attempt failed.\n\tat org.postgresql.core.v3.ConnectionFactoryImpl.openConnectionImpl(ConnectionFactoryImpl.java:292)\n\tat org.postgresql.core.ConnectionFactory.openConnection(ConnectionFactory.java:49)\n\tat org.postgresql.jdbc.PgConnection.<init>(PgConnection.java:195)\n\tat org.postgresql.Driver.makeConnection(Driver.java:454)\n\tat org.postgresql.Driver.connect(Driver.java:256)\n\tat java.sql.DriverManager.getConnection(DriverManager.java:664)\n\tat java.sql.DriverManager.getConnection(DriverManager.java:247)\n\tat com.esri.arcgis.gae.gp.ags.RelationalDataStore.testJdbcConnection(RelationalDataStore.scala:48)\n\tat com.esri.arcgis.gae.gp.ags.RelationalDataStore.<init>(RelationalDataStore.scala:41)\n\tat com.esri.arcgis.gae.gp.ags.RelationalDataStore$Provider.loadDataStore(RelationalDataStore.scala:180)\n\tat com.esri.arcgis.gae.gp.ags.RelationalDataStore$Provider.loadDataStore(RelationalDataStore.scala:172)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$$anonfun$apply$3.apply(ManagedDataStore.scala:115)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$$anonfun$apply$3.apply(ManagedDataStore.scala:115)\n\tat scala.util.Try$.apply(Try.scala:192)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$.apply(ManagedDataStore.scala:114)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$$anonfun$apply$1.apply(ManagedDataStore.scala:108)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$$anonfun$apply$1.apply(ManagedDataStore.scala:108)\n\tat scala.Option.map(Option.scala:146)\n\tat com.esri.arcgis.gae.ags.datastore.managed.ManagedDataStore$.apply(ManagedDataStore.scala:108)\n\tat com.esri.arcgis.gae.gp.ags.EnterpriseWebGISContext$managedDataStores$.getWithServiceInfoName(EnterpriseWebGISContext.scala:47)\n\tat 
com.esri.arcgis.gae.ags.layer.input.ShortCircuitFSLayerInputHandler$.tryGet(ShortCircuitFSLayerInputHandler.scala:150)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$$anonfun$apply$7$$anonfun$9.apply(GPLayerInputHandler.scala:199)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$$anonfun$apply$7$$anonfun$9.apply(GPLayerInputHandler.scala:199)\n\tat scala.util.Try$.apply(Try.scala:192)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$$anonfun$apply$7.apply(GPLayerInputHandler.scala:199)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$$anonfun$apply$7.apply(GPLayerInputHandler.scala:197)\n\tat scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)\n\tat scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)\n\tat scala.collection.immutable.List.foreach(List.scala:392)\n\tat scala.collection.TraversableLike$class.map(TraversableLike.scala:234)\n\tat scala.collection.immutable.List.map(List.scala:296)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$.apply(GPLayerInputHandler.scala:197)\n\tat com.esri.arcgis.gae.ags.layer.input.GPLayerInputHandler$.read(GPLayerInputHandler.scala:138)\n\tat com.esri.arcgis.gae.gp.adapt.param.LayerInputConverter.read(LayerInputConverter.scala:82)\n\tat com.esri.arcgis.gae.gp.adapt.param.MultiLayerInputConverter$$anonfun$1.apply(MultiLayerInputConverter.scala:21)\n\tat com.esri.arcgis.gae.gp.adapt.param.MultiLayerInputConverter$$anonfun$1.apply(MultiLayerInputConverter.scala:21)\n\tat scala.collection.TraversableLike$$anonfun$flatMap$1.apply(TraversableLike.scala:241)\n\tat scala.collection.TraversableLike$$anonfun$flatMap$1.apply(TraversableLike.scala:241)\n\tat scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)\n\tat scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:48)\n\tat scala.collection.TraversableLike$class.flatMap(TraversableLike.scala:241)\n\tat 
scala.collection.AbstractTraversable.flatMap(Traversable.scala:104)\n\tat com.esri.arcgis.gae.gp.adapt.param.MultiLayerInputConverter.read(MultiLayerInputConverter.scala:21)\n\tat com.esri.arcgis.gae.gp.adapt.param.GPParameterAdapter$$anon$1.readParam(GPParameterAdapter.scala:36)\n\tat com.esri.arcgis.gae.fn.api.v1.GAFunctionArgs$$anonfun$1.applyOrElse(GAFunctionArgs.scala:12)\n\tat com.esri.arcgis.gae.fn.api.v1.GAFunctionArgs$$anonfun$1.applyOrElse(GAFunctionArgs.scala:11)\n\tat scala.PartialFunction$$anonfun$runWith$1.apply(PartialFunction.scala:141)\n\tat scala.PartialFunction$$anonfun$runWith$1.apply(PartialFunction.scala:140)\n\tat scala.collection.immutable.List.foreach(List.scala:392)\n\tat scala.collection.TraversableLike$class.collect(TraversableLike.scala:271)\n\tat scala.collection.immutable.List.collect(List.scala:326)\n\tat com.esri.arcgis.gae.fn.api.v1.GAFunctionArgs.<init>(GAFunctionArgs.scala:11)\n\tat com.esri.arcgis.gae.fn.api.v1.GAFunctionArgs$.apply(GAFunctionArgs.scala:58)\n\tat com.esri.arcgis.gae.fn.api.v1.GAFunction$class.executeFunction(GAFunction.scala:20)\n\tat com.esri.arcgis.gae.gp.fn.FnRunPythonScript$.executeFunction(FnRunPythonScript.scala:10)\n\tat com.esri.arcgis.gae.gp.GPToGAFunctionAdapter$$anonfun$execute$1.apply$mcV$sp(GPToGAFunctionAdapter.scala:152)\n\tat com.esri.arcgis.gae.gp.GPToGAFunctionAdapter$$anonfun$execute$1.apply(GPToGAFunctionAdapter.scala:152)\n\tat com.esri.arcgis.gae.gp.GPToGAFunctionAdapter$$anonfun$execute$1.apply(GPToGAFunctionAdapter.scala:152)\n\tat com.esri.arcgis.st.util.ScopeManager.withScope(ScopeManager.scala:20)\n\tat com.esri.arcgis.st.spark.ExecutionContext$class.withScope(ExecutionContext.scala:23)\n\tat com.esri.arcgis.st.spark.GenericExecutionContext.withScope(ExecutionContext.scala:52)\n\tat com.esri.arcgis.gae.gp.GPToGAFunctionAdapter.execute(GPToGAFunctionAdapter.scala:151)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)\n\tat 
sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)\n\tat sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)\n\tat java.lang.reflect.Method.invoke(Method.java:498)\n\tat com.esri.arcgis.interop.NativeObjRef.nativeVtblInvokeNative(Native Method)\n\tat com.esri.arcgis.interop.NativeObjRef.nativeVtblInvoke(Unknown Source)\n\tat com.esri.arcgis.interop.NativeObjRef.invoke(Unknown Source)\n\tat com.esri.arcgis.interop.Dispatch.vtblInvoke(Unknown Source)\n\tat com.esri.arcgis.system.IRequestHandlerProxy.handleStringRequest(Unknown Source)\n\tat com.esri.arcgis.discovery.servicelib.impl.SOThreadBase.handleSoapRequest(SOThreadBase.java:574)\n\tat com.esri.arcgis.discovery.servicelib.impl.DedicatedSOThread.handleRequest(DedicatedSOThread.java:310)\n\tat com.esri.arcgis.discovery.servicelib.impl.SOThreadBase.startRun(SOThreadBase.java:430)\n\tat com.esri.arcgis.discovery.servicelib.impl.DedicatedSOThread.run(DedicatedSOThread.java:166)\nCaused by: java.net.SocketTimeoutException: connect timed out\n\tat java.net.PlainSocketImpl.socketConnect(Native Method)\n\tat java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)\n\tat java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)\n\tat java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)\n\tat java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)\n\tat java.net.Socket.connect(Socket.java:607)\n\tat org.postgresql.core.PGStream.<init>(PGStream.java:70)\n\tat org.postgresql.core.v3.ConnectionFactoryImpl.tryConnect(ConnectionFactoryImpl.java:91)\n\tat org.postgresql.core.v3.ConnectionFactoryImpl.openConnectionImpl(ConnectionFactoryImpl.java:192)\n\t... 74 more\n'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:49] Trying handler: com.esri.arcgis.gae.ags.layer.input.HostedFSLayerInputHandler$@4be79210'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:49] Trying handler: com.esri.arcgis.gae.ags.layer.input.RemoteFSLayerInputHandler$@4d7c1897'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:49] Trying handler: com.esri.arcgis.gae.ags.layer.input.BigDataFileShareLayerInputHandler$@c0e37d4'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:49] Using input handler: HostedFSLayerInputHandler'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:50] Input layer has allocated 6 read task(s)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:50] Input layer known upper bounds (countEstimate=3220,spatialExtent={"xmin":-19942591.999999996,"ymin":2023652.7970000058,"xmax":20012848.000000007,"ymax":11537127.5},temporalExtent=N/A)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:50] Found 1 pieces of metadata: ServiceLayerMetadata'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:50] Validating function inputs'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:52] Found python executable at '/home/arcgis/anaconda3/envs/ga2/bin/python'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:53] Initializing SparkSession'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:53] Running user script (/tmp/ga-python-runner4272143704664056645/script.py)'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Parameter 'targetLayer' set to 'LayerInput(WrappedFeatureSchemaRDD[13] at RDD at FeatureRDD.scala:37,ArrayBuffer())' (python input=[fid: bigint, state_name: string ... 6 more fields])"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Parameter 'joinLayer' set to 'LayerInput(WrappedFeatureSchemaRDD[20] at RDD at FeatureRDD.scala:37,ArrayBuffer())' (python input=[State Code: bigint, County Code: bigint ... 24 more fields])"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Parameter 'joinOperation' set to 'JoinOneToOne' (python input=JoinOneToOne)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Parameter 'summaryFields' set to 'ArrayBuffer(SummaryStatistic(Mean,Sample Measurement,None,None))' (python input=[{statisticType=mean, onStatisticField=Sample Measurement}])"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Parameter 'spatialRelationship' set to 'Contains' (python input=Contains)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:53] Executing function 'JoinFeatures'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:53] Validating function inputs'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:53] BinJoin(target, join, FeatureRelationship(Some(SpatialXYRelationship(Contains)),None,List()), JoinParams(None,None,None,None,QuadtreeParams(None,None,None,None,0),20000,Default,None,None,None))'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:54] preAggr=true; filterByExtent=false; useQuadMesh=true; cellSide=-9.9; joinParams = JoinParams(None,None,None,None,QuadtreeParams(None,None,None,None,0),20000,Default,Some(enumMedium),None,Some(0))'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101028","message":"Starting new distributed job with 3441 tasks.","params":{"totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:54] [Spark] Job has 3 stages'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:44:54] [Spark] Submitted stage 'RDD at BinnedRDD.scala:15' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"0/3441 distributed tasks completed.","params":{"completedTasks":"0","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:44:54] [Spark] Job has accepted first task and is no longer pending'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"2/3441 distributed tasks completed.","params":{"completedTasks":"2","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"23/3441 distributed tasks completed.","params":{"completedTasks":"23","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"44/3441 distributed tasks completed.","params":{"completedTasks":"44","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"67/3441 distributed tasks completed.","params":{"completedTasks":"67","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"88/3441 distributed tasks completed.","params":{"completedTasks":"88","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"109/3441 distributed tasks completed.","params":{"completedTasks":"109","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"129/3441 distributed tasks completed.","params":{"completedTasks":"129","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"150/3441 distributed tasks completed.","params":{"completedTasks":"150","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"170/3441 distributed tasks completed.","params":{"completedTasks":"170","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"193/3441 distributed tasks completed.","params":{"completedTasks":"193","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"213/3441 distributed tasks completed.","params":{"completedTasks":"213","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"234/3441 distributed tasks completed.","params":{"completedTasks":"234","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"254/3441 distributed tasks completed.","params":{"completedTasks":"254","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"276/3441 distributed tasks completed.","params":{"completedTasks":"276","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"299/3441 distributed tasks completed.","params":{"completedTasks":"299","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"321/3441 distributed tasks completed.","params":{"completedTasks":"321","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"344/3441 distributed tasks completed.","params":{"completedTasks":"344","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"366/3441 distributed tasks completed.","params":{"completedTasks":"366","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"390/3441 distributed tasks completed.","params":{"completedTasks":"390","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"411/3441 distributed tasks completed.","params":{"completedTasks":"411","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"432/3441 distributed tasks completed.","params":{"completedTasks":"432","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"453/3441 distributed tasks completed.","params":{"completedTasks":"453","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"475/3441 distributed tasks completed.","params":{"completedTasks":"475","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"498/3441 distributed tasks completed.","params":{"completedTasks":"498","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"519/3441 distributed tasks completed.","params":{"completedTasks":"519","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"542/3441 distributed tasks completed.","params":{"completedTasks":"542","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"566/3441 distributed tasks completed.","params":{"completedTasks":"566","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"590/3441 distributed tasks completed.","params":{"completedTasks":"590","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"607/3441 distributed tasks completed.","params":{"completedTasks":"607","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"622/3441 distributed tasks completed.","params":{"completedTasks":"622","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"636/3441 distributed tasks completed.","params":{"completedTasks":"636","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"658/3441 distributed tasks completed.","params":{"completedTasks":"658","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"679/3441 distributed tasks completed.","params":{"completedTasks":"679","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"701/3441 distributed tasks completed.","params":{"completedTasks":"701","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"722/3441 distributed tasks completed.","params":{"completedTasks":"722","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"743/3441 distributed tasks completed.","params":{"completedTasks":"743","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"765/3441 distributed tasks completed.","params":{"completedTasks":"765","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"786/3441 distributed tasks completed.","params":{"completedTasks":"786","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"807/3441 distributed tasks completed.","params":{"completedTasks":"807","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"828/3441 distributed tasks completed.","params":{"completedTasks":"828","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"848/3441 distributed tasks completed.","params":{"completedTasks":"848","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"869/3441 distributed tasks completed.","params":{"completedTasks":"869","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"891/3441 distributed tasks completed.","params":{"completedTasks":"891","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"911/3441 distributed tasks completed.","params":{"completedTasks":"911","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"932/3441 distributed tasks completed.","params":{"completedTasks":"932","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"954/3441 distributed tasks completed.","params":{"completedTasks":"954","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"977/3441 distributed tasks completed.","params":{"completedTasks":"977","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"999/3441 distributed tasks completed.","params":{"completedTasks":"999","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1019/3441 distributed tasks completed.","params":{"completedTasks":"1019","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1041/3441 distributed tasks completed.","params":{"completedTasks":"1041","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1062/3441 distributed tasks completed.","params":{"completedTasks":"1062","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1081/3441 distributed tasks completed.","params":{"completedTasks":"1081","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1104/3441 distributed tasks completed.","params":{"completedTasks":"1104","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1125/3441 distributed tasks completed.","params":{"completedTasks":"1125","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:41] [Spark] Completed stage 'RDD at BinnedRDD.scala:15' (attempt=0,gc-time=6709,disk-spill=0b,cpu-time=1660433228599ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:41] [Spark] Submitted stage 'keyBy at FeatureMetrics.scala:18' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1324/3441 distributed tasks completed.","params":{"completedTasks":"1324","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:44] [Spark] Completed stage 'keyBy at FeatureMetrics.scala:18' (attempt=0,gc-time=50,disk-spill=0b,cpu-time=4804434050ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:44] [Spark] Submitted stage 'collect at FeatureMetrics.scala:18' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"3441/3441 distributed tasks completed.","params":{"completedTasks":"3441","totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:46] [Spark] Completed stage 'collect at FeatureMetrics.scala:18' (attempt=0,gc-time=0,disk-spill=0b,cpu-time=2106058490ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101028","message":"Starting new distributed job with 3441 tasks.","params":{"totalTasks":"3441"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:46] [Spark] Job has 3 stages'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:46] [Spark] Submitted stage 'flatMap at QuadtreeMesh.scala:146' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:48] [Spark] Completed stage 'flatMap at QuadtreeMesh.scala:146' (attempt=0,gc-time=101,disk-spill=0b,cpu-time=4875384599ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:48] [Spark] Submitted stage 'collect at QuadtreeMesh.scala:158' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:49] [Spark] Completed stage 'collect at QuadtreeMesh.scala:158' (attempt=0,gc-time=0,disk-spill=0b,cpu-time=1250333332ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:49] 312 quadrants (1147 partitions) - (-1.1300965787500001E7,3261450.1495,-1.13009336355E7,3261465.0365000004) - QuadtreeMeta(20,6,16384,Point) - actual depth: 6'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:50] Using cached webgis with id '21478e22-9d60-4863-926c-c109c6ddb680'"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:50] Creating portal feature service item'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] Portal item created (id=81611d0a31a346c8bd641e4b1a4a514b)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] Adding result layer'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] (5735) WrappedFeatureSchemaRDD[54] at RDD at FeatureRDD.scala:37 []\n | GenericFeatureSchemaRDD[53] at RDD at GenericFeatureSchemaRDD.scala:14 []\n | MapPartitionsRDD[52] at map at AdaptUtil.scala:150 []\n | MapPartitionsRDD[51] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[50] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[49] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[48] at createDataFrame at AdaptUtil.scala:110 []\n | MapPartitionsRDD[47] at map at AdaptUtil.scala:88 []\n | GenericFeatureSchemaRDD[45] at RDD at GenericFeatureSchemaRDD.scala:14 []\n | MapPartitionsRDD[44] at map at SummarySpatialJoin.scala:216 []\n | MapPartitionsRDD[43] at filter at SummarySpatialJoin.scala:215 []\n | ShuffledRDD[42] at combineByKey at SummarySpatialJoin.scala:211 []\n +-(5735) MapPartitionsRDD[41] at flatMap at SummarySpatialJoin.scala:199 []\n | MapPartitionsRDD[40] at cogroup at SummarySpatialJoin.scala:197 []\n | CoGroupedRDD[39] at cogroup at SummarySpatialJoin.scala:197 []\n +-(6) MapPartitionsRDD[38] at flatMap at SummarySpatialJoin.scala:190 []\n | | GenericFeatureSchemaRDD[37] at RDD at GenericFeatureSchemaRDD.scala:14 []\n | | MapPartitionsRDD[36] at mapPartitionsWithIndex at package.scala:34 []\n | | WrappedFeatureSchemaRDD[13] at RDD at FeatureRDD.scala:37 []\n | | GenericFeatureSchemaRDD[12] at RDD at GenericFeatureSchemaRDD.scala:14 []\n | | MapPartitionsRDD[11] at map at AdaptUtil.scala:150 []\n | | MapPartitionsRDD[10] at rdd at AdaptUtil.scala:150 []\n | | MapPartitionsRDD[9] at rdd at AdaptUtil.scala:150 []\n | | MapPartitionsRDD[8] at rdd at AdaptUtil.scala:150 []\n | | MapPartitionsRDD[7] at createDataFrame at AdaptUtil.scala:110 []\n | | MapPartitionsRDD[6] at map at AdaptUtil.scala:88 []\n | | FeatureServiceLayerRDD[3] at RDD at FeatureRDD.scala:37 []\n +-(1147) MapPartitionsRDD[35] at flatMap at SummarySpatialJoin.scala:140 []\n | GenericFeatureSchemaRDD[26] at RDD at 
GenericFeatureSchemaRDD.scala:14 []\n | MapPartitionsRDD[25] at map at BinnedRDD.scala:58 []\n | ShuffledRDD[24] at combineByKey at BinnedRDD.scala:57 []\n +-(1147) BinnedRDD[23] at RDD at BinnedRDD.scala:15 []\n | (OperatorSelectFields) BatchFeatureOperatorRDD[22] at RDD at BatchFeatureOperatorRDD.scala:17 []\n | (OperatorProject) BatchFeatureOperatorRDD[21] at RDD at BatchFeatureOperatorRDD.scala:17 []\n | WrappedFeatureSchemaRDD[20] at RDD at FeatureRDD.scala:37 []\n | GenericFeatureSchemaRDD[19] at RDD at GenericFeatureSchemaRDD.scala:14 []\n | MapPartitionsRDD[18] at map at AdaptUtil.scala:150 []\n | MapPartitionsRDD[17] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[16] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[15] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[14] at rdd at AdaptUtil.scala:150 []\n | MapPartitionsRDD[5] at createDataFrame at AdaptUtil.scala:110 []\n | MapPartitionsRDD[4] at map at AdaptUtil.scala:88 []\n | WrappedFeatureSchemaRDD[2] at RDD at FeatureRDD.scala:37 []\n | (OperatorDecodeSpecialFields) BatchFeatureOperatorRDD[1] at RDD at BatchFeatureOperatorRDD.scala:17 []\n | DelimitedFileRDD[0] at RDD at DelimitedFileRDD.scala:39 []'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:54] Rewriting field names to match data store naming rules ('SHAPE__Length' -> 'SHAPE__Length1', 'SHAPE__Area' -> 'SHAPE__Area1', 'MEAN_Sample Measurement' -> 'MEAN_Sample_Measurement')"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] Writing to managed data store (datastore=SpatiotemporalDataStore,dataset=gaxc1b7671cda424b938658a0e307cf97ba)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] Discovered 1 ES machine(s). Setting result shard count to 6'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] Creating data source: CreateRequest(gaxc1b7671cda424b938658a0e307cf97ba,esriGeometryPolygon,BDSSchema([Lcom.esri.arcgis.bds.BDSField;@34ff4b79,Shape,null,null,null),0,6,60,esriTimeUnitsSeconds,true,false,true,0.0,ObjectId64Bit,Yearly,0,1000,OBJECTID,globalid,-1,[Lcom.esri.arcgis.bds.EsriGeoHash;@5a9a044e,false,1,Months,10000,false,50m,0.025,)'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101028","message":"Starting new distributed job with 13770 tasks.","params":{"totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:49:54] [Spark] Job has 5 stages'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:54] [Spark] Submitted stage 'flatMap at SummarySpatialJoin.scala:190' with 6 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"0/13770 distributed tasks completed.","params":{"completedTasks":"0","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:55] [Spark] Submitted stage 'flatMap at SummarySpatialJoin.scala:140' with 1147 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:49:57] [Spark] Completed stage 'flatMap at SummarySpatialJoin.scala:140' (attempt=0,gc-time=274,disk-spill=0b,cpu-time=4876253908ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"1152/13770 distributed tasks completed.","params":{"completedTasks":"1152","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:14] [Spark] Completed stage 'flatMap at SummarySpatialJoin.scala:190' (attempt=0,gc-time=176,disk-spill=0b,cpu-time=6587011552ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:14] [Spark] Submitted stage 'flatMap at SummarySpatialJoin.scala:199' with 5735 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"4675/13770 distributed tasks completed.","params":{"completedTasks":"4675","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:21] [Spark] Completed stage 'flatMap at SummarySpatialJoin.scala:199' (attempt=0,gc-time=198,disk-spill=0b,cpu-time=21348273576ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:21] [Spark] Submitted stage 'runJob at EsSpark.scala:108' with 5735 tasks (attempt=0)"},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"7510/13770 distributed tasks completed.","params":{"completedTasks":"7510","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"9740/13770 distributed tasks completed.","params":{"completedTasks":"9740","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101029","message":"12090/13770 distributed tasks completed.","params":{"completedTasks":"12090","totalTasks":"13770"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:35] [Spark] Completed stage 'runJob at EsSpark.scala:108' (attempt=0,gc-time=838,disk-spill=0b,cpu-time=42588676703ns)"},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Updating metadata properties for gaxc1b7671cda424b938658a0e307cf97ba after write'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Results written in 41067ms (WriteResult(None,None,0,0))'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101081","message":"Finished writing results:"}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101082","message":"* Count of features = 0","params":{"resultCount":"0"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101083","message":"* Spatial extent = None","params":{"extent":"None"}}'},

{'type': 'esriJobMessageTypeInformative',

'description': '{"messageCode":"BD_101084","message":"* Temporal extent = None","params":{"extent":"None"}}'},

{'type': 'esriJobMessageTypeWarning',

'description': '{"messageCode":"BD_101010","message":"The result of your analysis did not return any features. No layer will be created."}'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Removing empty dataset from datastore'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Running cleanup tasks for conditions (Success,Complete)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Running cleanup task [? @ ManagedLayerResultManager.scala:154].'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] No non-empty layers have been added, removing Portal item'},

{'type': 'esriJobMessageTypeInformative',

'description': '[ERROR|07:50:35] Cleanup task [? @ ManagedLayerResultManager.scala:154] completed with failure: Server returned error: [Unable to delete item: https://pythonapi.playground.esri.com/server/rest/services/Hosted/average_pm_by_county/FeatureServer]'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Cleanup task [? @ ManagedLayerResultManager.scala:154] completed with failure: Server returned error: [Unable to delete item: https://pythonapi.playground.esri.com/server/rest/services/Hosted/average_pm_by_county/FeatureServer]'},

{'type': 'esriJobMessageTypeInformative',

'description': 'Server returned error: [Unable to delete item: https://pythonapi.playground.esri.com/server/rest/services/Hosted/average_pm_by_county/FeatureServer]'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG]com.esri.arcgis.gae.ags.RestUtil$ServerException: Server returned error: [Unable to delete item: https://pythonapi.playground.esri.com/server/rest/services/Hosted/average_pm_by_county/FeatureServer]\n\tat com.esri.arcgis.gae.ags.RestUtil$.assertResponseSuccess(RestUtil.scala:227)\n\tat com.esri.arcgis.gae.ags.PortalClient.deleteItem(PortalClient.scala:177)\n\tat com.esri.arcgis.gae.ags.layer.output.ManagedLayerResultManager$$anonfun$createResultServiceInPortal$2.apply$mcV$sp(ManagedLayerResultManager.scala:157)\n\tat com.esri.arcgis.gae.ags.layer.output.ManagedLayerResultManager$$anonfun$createResultServiceInPortal$2.apply(ManagedLayerResultManager.scala:155)\n\tat com.esri.arcgis.gae.ags.layer.output.ManagedLayerResultManager$$anonfun$createResultServiceInPortal$2.apply(ManagedLayerResultManager.scala:155)\n\tat com.esri.arcgis.gae.fn.api.v1.GenericCleanupManager$$anonfun$runTasks$3.apply(CleanupManager.scala:146)\n\tat com.esri.arcgis.gae.fn.api.v1.GenericCleanupManager$$anonfun$runTasks$3.apply(CleanupManager.scala:141)\n\tat scala.collection.mutable.ResizableArray$class.foreach(ResizableArray.scala:59)\n\tat scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:48)\n\tat com.esri.arcgis.gae.fn.api.v1.GenericCleanupManager.runTasks(CleanupManager.scala:141)\n\tat com.esri.arcgis.gae.fn.api.v1.GenericCleanupManager.runCleanupForSuccess(CleanupManager.scala:99)\n\tat com.esri.arcgis.gae.fn.api.v1.GenericCleanupManager.monitor(CleanupManager.scala:116)\n\tat com.esri.arcgis.gae.ags.package$LayerOutputOps.saveAsLayer(package.scala:140)\n\tat com.esri.arcgis.gae.ags.package$LayerOutputOps.saveToWebGIS(package.scala:124)\n\tat com.esri.arcgis.gae.ags.layer.df.WebGISProvider.createRelation(WebGISProvider.scala:50)\n\tat org.apache.spark.sql.execution.datasources.SaveIntoDataSourceCommand.run(SaveIntoDataSourceCommand.scala:45)\n\tat 
org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:70)\n\tat org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:68)\n\tat org.apache.spark.sql.execution.command.ExecutedCommandExec.doExecute(commands.scala:86)\n\tat org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$1.apply(SparkPlan.scala:131)\n\tat org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$1.apply(SparkPlan.scala:127)\n\tat org.apache.spark.sql.execution.SparkPlan$$anonfun$executeQuery$1.apply(SparkPlan.scala:155)\n\tat org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)\n\tat org.apache.spark.sql.execution.SparkPlan.executeQuery(SparkPlan.scala:152)\n\tat org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:127)\n\tat org.apache.spark.sql.execution.QueryExecution.toRdd$lzycompute(QueryExecution.scala:80)\n\tat org.apache.spark.sql.execution.QueryExecution.toRdd(QueryExecution.scala:80)\n\tat org.apache.spark.sql.DataFrameWriter$$anonfun$runCommand$1.apply(DataFrameWriter.scala:676)\n\tat org.apache.spark.sql.DataFrameWriter$$anonfun$runCommand$1.apply(DataFrameWriter.scala:676)\n\tat org.apache.spark.sql.execution.SQLExecution$$anonfun$withNewExecutionId$1.apply(SQLExecution.scala:78)\n\tat org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:125)\n\tat org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:73)\n\tat org.apache.spark.sql.DataFrameWriter.runCommand(DataFrameWriter.scala:676)\n\tat org.apache.spark.sql.DataFrameWriter.saveToV1Source(DataFrameWriter.scala:285)\n\tat org.apache.spark.sql.DataFrameWriter.save(DataFrameWriter.scala:271)\n\tat org.apache.spark.sql.DataFrameWriter.save(DataFrameWriter.scala:229)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)\n\tat sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)\n\tat 
sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)\n\tat java.lang.reflect.Method.invoke(Method.java:498)\n\tat py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)\n\tat py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357)\n\tat py4j.Gateway.invoke(Gateway.java:282)\n\tat py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)\n\tat py4j.commands.CallCommand.execute(CallCommand.java:79)\n\tat py4j.GatewayConnection.run(GatewayConnection.java:238)\n\tat java.lang.Thread.run(Thread.java:748)\n'},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:35] Running cleanup task ['Maybe update portal item data type keyword' @ ManagedLayerResultManager.scala:392]."},

{'type': 'esriJobMessageTypeInformative',

'description': "[ERROR|07:50:35] CLEANUP_TASK_FAILED taskName -> 'Maybe update portal item data type keyword' @ ManagedLayerResultManager.scala:392,reason -> null"},

{'type': 'esriJobMessageTypeInformative',

'description': "[DEBUG|07:50:35] CLEANUP_TASK_FAILED taskName -> 'Maybe update portal item data type keyword' @ ManagedLayerResultManager.scala:392,reason -> null"},

{'type': 'esriJobMessageTypeInformative', 'description': ''},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG]scala.runtime.NonLocalReturnControl$mcV$sp\n'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Running cleanup task [? @ LayerResultUtil.scala:134].'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:35] Cleanup task [? @ LayerResultUtil.scala:134] completed successfully.'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:36] User script completed'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:38] Running cleanup tasks for conditions (Success,Complete)'},

{'type': 'esriJobMessageTypeInformative',

'description': '[DEBUG|07:50:38] Running cleanup task [? @ DebugUtil.scala:52].'},

{'type': 'esriJobMessageTypeWarning',

'description': 'Detaching log redirect'},

{'type': 'esriJobMessageTypeInformative',

'description': 'Succeeded at Wed Jun 30 07:50:38 2021 (Elapsed Time: 6 minutes 3 seconds)'}]

In this guide, we learned how to manage our tabular and geographic big data. In the next guide, we will learn about the run_python_script tool.
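The job messages printed above form a list of dictionaries, each with a `type` and a `description` key. Because this debug output is long, it can help to summarize it in code instead of reading it line by line. The sketch below relies only on the message shape visible in this output. The function name `summarize_job_messages` is hypothetical and is not part of the ArcGIS API for Python. It collects warning-type messages, lines logged with an `[ERROR` prefix, the most recent distributed-task progress message (`BD_101029`), and the result feature count (`BD_101082`).

```python
import json
from typing import Any, Dict, List


def summarize_job_messages(messages: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Summarize GeoAnalytics job messages shaped like the output above.

    Hypothetical helper (not an ArcGIS API function). It assumes each message
    is a dict with 'type' and 'description' keys, and that structured messages
    carry a JSON object (with a 'messageCode' field) in 'description'.
    """
    summary: Dict[str, Any] = {
        "warnings": [],       # descriptions of esriJobMessageTypeWarning messages
        "errors": [],         # debug lines prefixed with [ERROR...]
        "progress": None,     # (completed, total) from the last BD_101029 message
        "result_count": None, # feature count from BD_101082
    }
    for msg in messages:
        desc = msg.get("description", "")
        if msg.get("type") == "esriJobMessageTypeWarning":
            summary["warnings"].append(desc)
        # In this log, errors are reported with an informative type,
        # so detect them by their text prefix instead.
        if desc.startswith("[ERROR"):
            summary["errors"].append(desc)
        # Structured messages are JSON objects; everything else is plain text.
        if desc.startswith("{"):
            try:
                payload = json.loads(desc)
            except json.JSONDecodeError:
                continue
            code = payload.get("messageCode")
            params = payload.get("params", {})
            if code == "BD_101029":
                summary["progress"] = (
                    int(params["completedTasks"]),
                    int(params["totalTasks"]),
                )
            elif code == "BD_101082":
                summary["result_count"] = int(params["resultCount"])
    return summary


# Usage with a few messages copied from the output above.
sample = [
    {"type": "esriJobMessageTypeInformative",
     "description": '{"messageCode":"BD_101029","message":"12090/13770 distributed tasks completed.","params":{"completedTasks":"12090","totalTasks":"13770"}}'},
    {"type": "esriJobMessageTypeInformative",
     "description": '{"messageCode":"BD_101082","message":"* Count of features = 0","params":{"resultCount":"0"}}'},
    {"type": "esriJobMessageTypeWarning",
     "description": '{"messageCode":"BD_101010","message":"The result of your analysis did not return any features. No layer will be created."}'},
    {"type": "esriJobMessageTypeInformative",
     "description": "[ERROR|07:50:35] CLEANUP_TASK_FAILED taskName -> 'Maybe update portal item data type keyword' @ ManagedLayerResultManager.scala:392,reason -> null"},
]
summary = summarize_job_messages(sample)
print(summary["progress"], summary["result_count"], len(summary["warnings"]), len(summary["errors"]))
```

Applied to this run, a summary like this surfaces the `BD_101010` warning together with a result count of 0. That combination explains why no output layer was created even though the job finished with a "Succeeded" message.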