Apache ORC (Optimized Row Columnar) is an open-source, type-aware columnar file format commonly used in Hadoop ecosystems. The ORC file format (.orc) is self-describing and is optimized for large streaming reads, while also including support for finding required rows quickly. Because of this, reading ORC data takes significantly less time, and storing data as ORC can reduce its size on disk. Additionally, ORC supports complex data types such as structs, lists, maps, and unions. ORC is supported natively in both Spark and Hive.

To learn more about ORC, see the ORC specification.

To learn more about using ORC with Spark and Hive, see the Spark documentation on ORC files.

After you load one or more ORC files as a Spark DataFrame, you can create a geometry column and define its spatial reference using GeoAnalytics for Microsoft Fabric SQL functions. For example, if you had points stored as WKT strings, you could call ST_PointFromText to create a point column from a string column and set its spatial reference. For more information, see Geometry.
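As a minimal sketch of that step, the helper below appends a point column built from a WKT string column by way of a SQL expression. The helper name `add_point_geometry`, the column names `wkt` and `geometry`, and the spatial reference ID 4326 are illustrative. The sketch also assumes that the GeoAnalytics SQL functions are already registered in the Spark session and that ST_PointFromText accepts the WKT column followed by a spatial reference ID; confirm both against the function reference.

```python
def add_point_geometry(df, wkt_column="wkt", srid=4326, geometry_column="geometry"):
    """Return df with an extra point column parsed from a WKT string column.

    Assumptions (not stated in this page): the GeoAnalytics SQL functions are
    registered in the session, and ST_PointFromText takes (wkt, srid).
    """
    expression = f"ST_PointFromText({wkt_column}, {srid}) AS {geometry_column}"
    # selectExpr keeps every existing column ("*") and appends the new one.
    return df.selectExpr("*", expression)
```

With a DataFrame loaded from ORC, the call would look like `add_point_geometry(df, "wkt", 4326)`.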

After creating the geometry column and defining its spatial reference, you can perform spatial analysis and visualization using the SQL functions and tools available in GeoAnalytics for Microsoft Fabric. You can also export a Spark DataFrame to ORC files for data storage or export to other systems.

Reading ORC Files in Spark

# Option 1

df = spark.read.orc("path/to/your/file.orc")

# Option 2

df = spark.read.format("orc").load("path/to/your/file.orc")

Options for reading ORC files

Here are some common options used when reading ORC files:

| DataFrameReader option | Example | Description |
|---|---|---|
| recursive | .option("recursive | Recursively look through ORC files under the given directory. |
| merge | .option("merge | Merge the schemas of a collection of ORC datasets in the input directory. |
| path | .option("path | Read in files with the specified name pattern under the given file path. |

For a complete list of options that can be used with the ORC data source in Spark, see the Spark documentation.
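The sketch below shows how such options chain onto a reader. It uses the Spark file source options `recursiveFileLookup`, `mergeSchema`, and `pathGlobFilter`, whose documented behavior corresponds to the three descriptions in the table. The helper name `read_orc_tree` and the default pattern `*.orc` are hypothetical.

```python
def read_orc_tree(spark, root_path, name_pattern="*.orc"):
    """Read every ORC file under root_path whose file name matches name_pattern."""
    reader = (
        spark.read.format("orc")
        # Descend into nested subdirectories. Spark disables partition
        # inference while this option is enabled.
        .option("recursiveFileLookup", "true")
        # Reconcile differing file schemas into one DataFrame schema.
        .option("mergeSchema", "true")
        # Only pick up files whose names match the glob pattern.
        .option("pathGlobFilter", name_pattern)
    )
    return reader.load(root_path)
```

Because `recursiveFileLookup` turns off partition inference, it is best suited to directory trees that do not follow the column=value naming convention.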

Specifying a Schema

To define the schema explicitly, you can use StructType and StructField in both PySpark and Scala.

from pyspark.sql.types import StructType, StructField, StringType, IntegerType

schema = StructType([
    StructField("name", StringType(), True),
    StructField("age", IntegerType(), True),
    StructField("city", StringType(), True)
])

df = spark.read.schema(schema).orc("path/to/your/file.orc")

Reading multiple ORC files

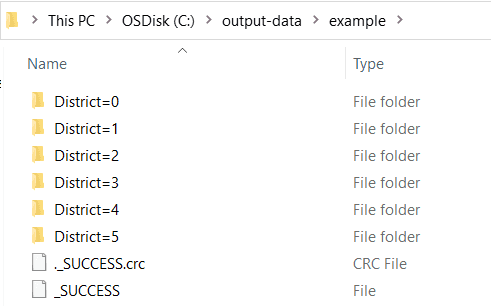

Spark can infer partition columns from directory names that follow the column=value format. For instance, if your ORC files are organized in subdirectories named District=0, District=2, etc., Spark will recognize District as a partition column when reading the data. This allows Spark to optimize query performance by reading only the relevant partitions.
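A minimal sketch of how that optimization is used in practice: read the root directory so Spark discovers the partition column, then filter on it. The helper name `read_one_district` is hypothetical, and the sketch assumes the District values are integers.

```python
def read_one_district(spark, root_path, district):
    """Read a partitioned ORC directory and keep a single District value."""
    # Reading the root path lets Spark discover District from the
    # District=<value> subdirectory names.
    df = spark.read.orc(root_path)
    # Filtering on the partition column lets Spark skip the
    # subdirectories that do not match.
    return df.where(f"District = {int(district)}")
```

For example, `read_one_district(spark, "path/to/your/files", 0)` would only need to scan the District=0 subdirectory.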

When reading a directory of ORC data whose subdirectories are not named in the column=value format, Spark won't read from the subdirectories in bulk. You must add a glob pattern at the end of the root path. Note that all subdirectories must contain ORC files in order to be read in bulk.

df = spark.read.orc("path/to/your/files/*.orc")

Writing DataFrames to ORC

# Option 1

df.write.orc("path/to/output/directory")

# Option 2

df.write.format("orc").save("path/to/output/directory")

Here are some common options used in writing ORC files in Spark:

| DataFrameWriter option | Example | Description |
|---|---|---|
| partition | .partition | Partition the output by the given column name. This example will partition the output ORC files by values in the date column. |
| overwrite | .mode("overwrite") | Overwrite existing data in the specified path. Other available options are append, error, and ignore. |
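The two writer settings can be combined in one call chain. The sketch below uses the PySpark `DataFrameWriter.partitionBy` and `mode` methods; the helper name `write_orc_by_date` is hypothetical, and the default column name `date` is taken from the table's description.

```python
def write_orc_by_date(df, output_dir, partition_column="date"):
    """Write df as ORC, one subdirectory per distinct partition_column value."""
    (
        df.write
        # Replace any data that already exists at output_dir.
        .mode("overwrite")
        # Produces subdirectories named <partition_column>=<value>.
        .partitionBy(partition_column)
        .orc(output_dir)
    )
```

The resulting subdirectories follow the column=value naming convention, so Spark can infer the partition column again when the data is read back.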

Usage notes

- The ORC data source doesn't support loading or saving DataFrames containing point, line, polygon, or geometry columns.

- The spatial reference of a geometry column always needs to be set when importing geometry data from ORC files.

- Consider explicitly saving the spatial reference in the ORC file as a column, or in the schema as part of the geometry column name. To read more on the best practices of working with spatial references in DataFrames, see the documentation on Coordinate Systems and Transformations.

- Be careful when saving to one ORC file using .coalesce(1) with large datasets. Consider partitioning large data by a certain attribute column to easily read and filter subdirectories of data and to improve performance.
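One way to carry the spatial reference in the column name can be sketched as follows. The `_srid<id>` suffix is a hypothetical naming convention invented for this example, not something GeoAnalytics or ORC defines, and both helper names are illustrative. The column being renamed is assumed to hold a serializable representation of the geometry, such as WKT strings, because geometry columns cannot be saved to ORC directly.

```python
import re

# Hypothetical convention: "<base>_srid<digits>", e.g. "shape_wkt_srid4326".
_SRID_SUFFIX = re.compile(r"^(?P<base>.+)_srid(?P<srid>\d+)$")


def tag_column_with_srid(df, column, srid):
    """Rename a column so that its name carries the spatial reference ID."""
    return df.withColumnRenamed(column, f"{column}_srid{int(srid)}")


def find_srid_columns(column_names):
    """Map each tagged column name to its (base name, SRID) pair."""
    found = {}
    for name in column_names:
        match = _SRID_SUFFIX.match(name)
        if match:
            found[name] = (match.group("base"), int(match.group("srid")))
    return found
```

Before writing, `tag_column_with_srid(df, "shape_wkt", 4326)` renames the column; after reading the files back, `find_srid_columns(df.columns)` recovers the ID to use when recreating the geometry column.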